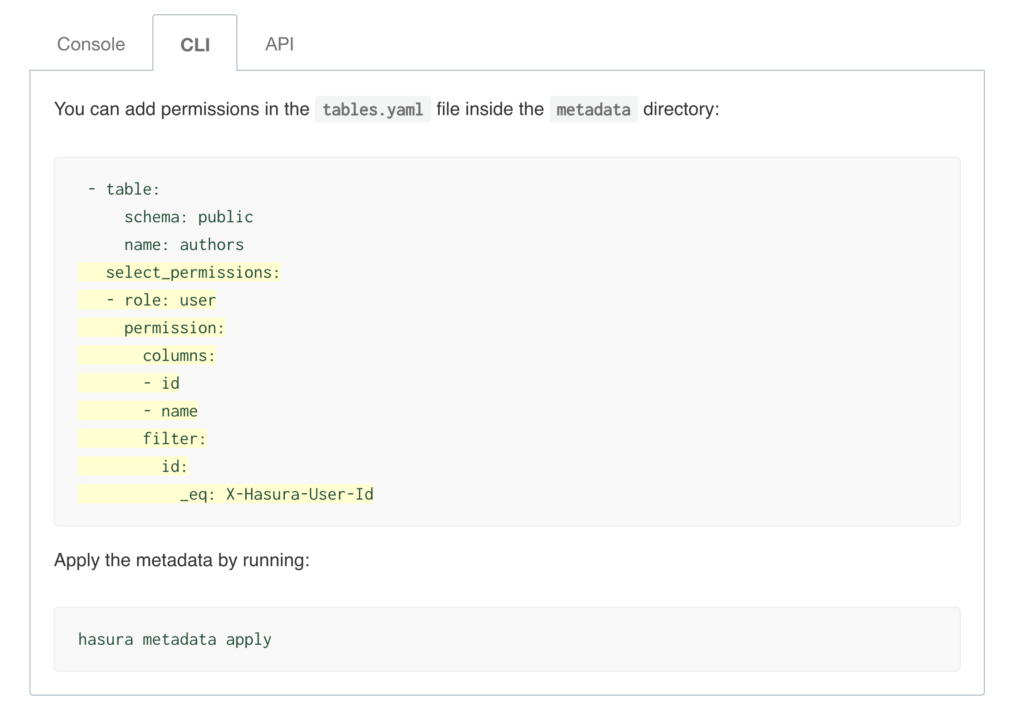

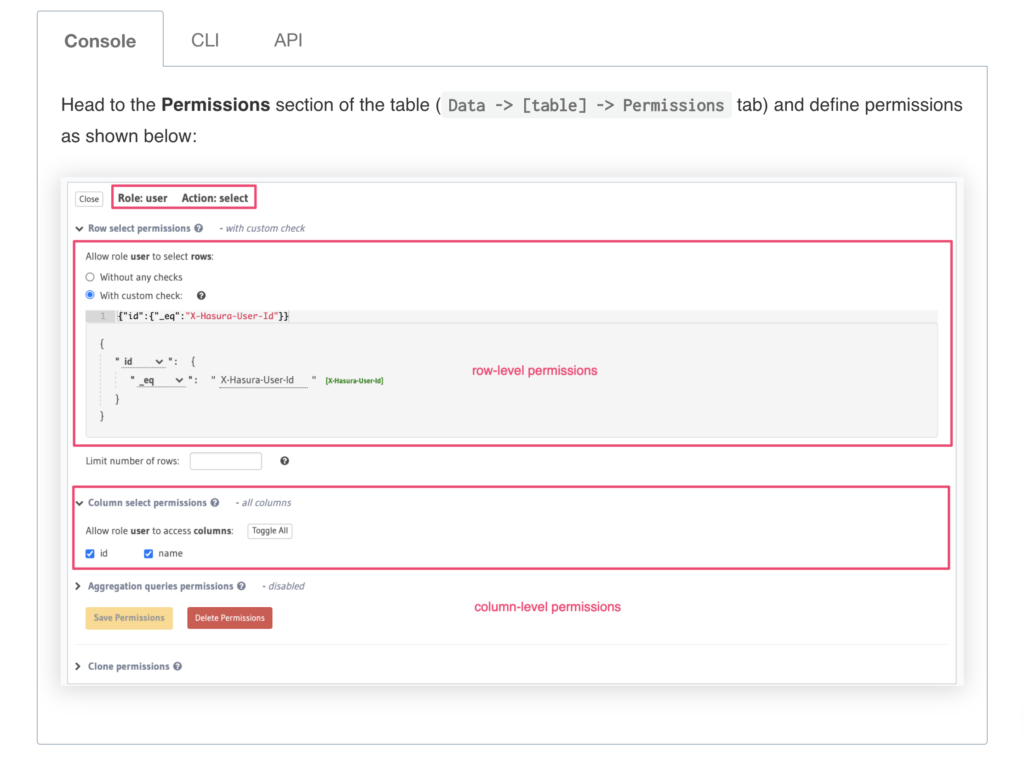

Hasura allows you to define role-based access control rules for each of the models/tables that you use. Access control rules help in restricting querying on a table based on certain conditions.

Python Advanced – Numpy Arrays

Introduction

NumPy (Numerical Python) is the foundational library for numerical computing in Python. If you’ve worked with data science, machine learning, image processing, or scientific computing in Python, you’ve almost certainly used NumPy — whether directly or through libraries built on top of it like pandas, scikit-learn, TensorFlow, and OpenCV.

Here’s why NumPy matters:

- Performance — NumPy arrays are stored in contiguous memory blocks and operations are implemented in optimized C code. This makes NumPy 10x to 100x faster than equivalent Python list operations.

- Vectorized operations — You can perform element-wise computations on entire arrays without writing explicit loops, leading to cleaner and faster code.

- Foundation for the ecosystem — pandas DataFrames, scikit-learn models, matplotlib plotting, and TensorFlow tensors all rely on NumPy arrays under the hood.

- Broadcasting — NumPy’s broadcasting rules let you perform operations on arrays of different shapes without manually reshaping or copying data.

- Rich mathematical toolkit — Linear algebra, Fourier transforms, random number generation, statistical functions — NumPy has it all built in.

In this tutorial, we’ll go deep on NumPy arrays — from creation to manipulation, from indexing to linear algebra. By the end, you’ll have a solid, practical understanding of the library that underpins nearly all of Python’s data stack.

Installation

NumPy is available via pip. If you don’t have it installed yet:

pip install numpy

If you’re using Anaconda, NumPy comes pre-installed. You can verify your installation:

import numpy as np print(np.__version__)

The convention of importing NumPy as np is universal in the Python ecosystem. Stick with it — every tutorial, Stack Overflow answer, and library documentation assumes this alias.

Creating Arrays

NumPy arrays (ndarray objects) are the core data structure. There are several ways to create them, each suited to different situations.

From Python Lists — np.array()

The most straightforward way to create a NumPy array is from an existing Python list or tuple:

import numpy as np

# 1D array

a = np.array([1, 2, 3, 4, 5])

print(a)

# Output: [1 2 3 4 5]

# 2D array (matrix)

b = np.array([[1, 2, 3],

[4, 5, 6]])

print(b)

# Output:

# [[1 2 3]

# [4 5 6]]

# 3D array

c = np.array([[[1, 2], [3, 4]],

[[5, 6], [7, 8]]])

print(c.shape)

# Output: (2, 2, 2)

# Specifying data type explicitly

d = np.array([1, 2, 3], dtype=np.float64)

print(d)

# Output: [1. 2. 3.]

Zero-Filled and One-Filled Arrays — np.zeros(), np.ones()

When you need arrays pre-filled with zeros or ones (common for initializing weight matrices, accumulators, or masks):

# 1D array of zeros zeros_1d = np.zeros(5) print(zeros_1d) # Output: [0. 0. 0. 0. 0.] # 2D array of zeros (3 rows, 4 columns) zeros_2d = np.zeros((3, 4)) print(zeros_2d) # Output: # [[0. 0. 0. 0.] # [0. 0. 0. 0.] # [0. 0. 0. 0.]] # 1D array of ones ones_1d = np.ones(4) print(ones_1d) # Output: [1. 1. 1. 1.] # 2D array of ones with integer type ones_int = np.ones((2, 3), dtype=np.int32) print(ones_int) # Output: # [[1 1 1] # [1 1 1]] # Full array with a custom fill value filled = np.full((2, 3), 7) print(filled) # Output: # [[7 7 7] # [7 7 7]] # Identity matrix eye = np.eye(3) print(eye) # Output: # [[1. 0. 0.] # [0. 1. 0.] # [0. 0. 1.]]

Ranges and Sequences — np.arange(), np.linspace()

np.arange() works like Python’s range() but returns an array. np.linspace() creates evenly spaced values between two endpoints — extremely useful for plotting and numerical methods.

# arange: start, stop (exclusive), step a = np.arange(0, 10, 2) print(a) # Output: [0 2 4 6 8] # arange with float step b = np.arange(0, 1, 0.2) print(b) # Output: [0. 0.2 0.4 0.6 0.8] # linspace: start, stop (inclusive), number of points c = np.linspace(0, 1, 5) print(c) # Output: [0. 0.25 0.5 0.75 1. ] # linspace is ideal for generating x-values for plots x = np.linspace(0, 2 * np.pi, 100) # 100 points from 0 to 2π

Random Arrays — np.random

NumPy’s random module is essential for simulations, testing, and machine learning initialization:

# Uniform random values between 0 and 1 rand_uniform = np.random.rand(3, 3) print(rand_uniform) # Output: 3x3 matrix of random floats in [0, 1) # Standard normal distribution (mean=0, std=1) rand_normal = np.random.randn(3, 3) print(rand_normal) # Output: 3x3 matrix of values from normal distribution # Random integers rand_int = np.random.randint(1, 100, size=(2, 4)) print(rand_int) # Output: 2x4 matrix of random ints between 1 and 99 # Reproducible random numbers with seed np.random.seed(42) reproducible = np.random.rand(3) print(reproducible) # Output: [0.37454012 0.95071431 0.73199394] # Using the newer Generator API (recommended for new code) rng = np.random.default_rng(seed=42) values = rng.random(5) print(values) # Output: [0.77395605 0.43887844 0.85859792 0.69736803 0.09417735] # Random choice from an array choices = rng.choice([10, 20, 30, 40, 50], size=3, replace=False) print(choices) # Output: 3 random elements without replacement

Array Properties

Understanding array properties is essential for debugging and writing correct NumPy code. Every ndarray carries metadata about its structure:

import numpy as np

arr = np.array([[1, 2, 3, 4],

[5, 6, 7, 8],

[9, 10, 11, 12]])

# shape: dimensions as a tuple (rows, columns)

print(f"Shape: {arr.shape}")

# Output: Shape: (3, 4)

# ndim: number of dimensions (axes)

print(f"Dimensions: {arr.ndim}")

# Output: Dimensions: 2

# size: total number of elements

print(f"Total elements: {arr.size}")

# Output: Total elements: 12

# dtype: data type of elements

print(f"Data type: {arr.dtype}")

# Output: Data type: int64

# itemsize: size of each element in bytes

print(f"Bytes per element: {arr.itemsize}")

# Output: Bytes per element: 8

# nbytes: total memory consumed

print(f"Total bytes: {arr.nbytes}")

# Output: Total bytes: 96

# Practical example: understanding memory usage

large_arr = np.zeros((1000, 1000), dtype=np.float64)

print(f"Memory: {large_arr.nbytes / 1024 / 1024:.1f} MB")

# Output: Memory: 7.6 MB

# Same array with float32 uses half the memory

small_arr = np.zeros((1000, 1000), dtype=np.float32)

print(f"Memory: {small_arr.nbytes / 1024 / 1024:.1f} MB")

# Output: Memory: 3.8 MB

The dtype attribute is particularly important. NumPy supports many data types: int8, int16, int32, int64, float16, float32, float64, complex64, complex128, bool, and more. Choosing the right dtype can significantly impact both memory usage and computation speed.

Indexing and Slicing

NumPy’s indexing is more powerful than Python list indexing. Mastering it will save you from writing unnecessary loops.

1D Indexing and Slicing

arr = np.array([10, 20, 30, 40, 50, 60, 70, 80]) # Basic indexing (0-based) print(arr[0]) # 10 print(arr[-1]) # 80 print(arr[-2]) # 70 # Slicing: start:stop:step print(arr[2:5]) # [30 40 50] print(arr[:3]) # [10 20 30] print(arr[5:]) # [60 70 80] print(arr[::2]) # [10 30 50 70] — every other element print(arr[::-1]) # [80 70 60 50 40 30 20 10] — reversed

2D Indexing and Slicing

matrix = np.array([[1, 2, 3, 4],

[5, 6, 7, 8],

[9, 10, 11, 12],

[13, 14, 15, 16]])

# Single element: [row, col]

print(matrix[0, 0]) # 1

print(matrix[2, 3]) # 12

# Entire row

print(matrix[1]) # [5 6 7 8]

print(matrix[1, :]) # [5 6 7 8] — equivalent

# Entire column

print(matrix[:, 2]) # [ 3 7 11 15]

# Sub-matrix (rows 0-1, columns 1-2)

print(matrix[0:2, 1:3])

# Output:

# [[2 3]

# [6 7]]

# Every other row, every other column

print(matrix[::2, ::2])

# Output:

# [[ 1 3]

# [ 9 11]]

Boolean Indexing

Boolean indexing is one of NumPy’s most powerful features. You create a boolean mask and use it to filter elements:

arr = np.array([15, 22, 8, 41, 3, 67, 29, 55]) # Elements greater than 20 mask = arr > 20 print(mask) # Output: [False True False True False True True True] print(arr[mask]) # Output: [22 41 67 29 55] # Shorthand — most common pattern print(arr[arr > 20]) # Output: [22 41 67 29 55] # Combining conditions (use & for AND, | for OR, ~ for NOT) print(arr[(arr > 10) & (arr < 50)]) # Output: [15 22 41 29] print(arr[(arr < 10) | (arr > 50)]) # Output: [ 8 3 67 55] # Boolean indexing on 2D arrays matrix = np.array([[1, 2], [3, 4], [5, 6]]) print(matrix[matrix % 2 == 0]) # Output: [2 4 6] — returns a flat array of even numbers

Fancy Indexing

Fancy indexing lets you use arrays of indices to access multiple elements at once:

arr = np.array([10, 20, 30, 40, 50])

# Select elements at indices 0, 2, and 4

indices = np.array([0, 2, 4])

print(arr[indices])

# Output: [10 30 50]

# Works with 2D arrays too

matrix = np.array([[1, 2, 3],

[4, 5, 6],

[7, 8, 9],

[10, 11, 12]])

# Select specific rows

print(matrix[[0, 2, 3]])

# Output:

# [[ 1 2 3]

# [ 7 8 9]

# [10 11 12]]

# Select specific elements: (row0,col1), (row1,col2), (row2,col0)

rows = np.array([0, 1, 2])

cols = np.array([1, 2, 0])

print(matrix[rows, cols])

# Output: [2 6 7]

Array Operations

NumPy’s real power shows up in array operations. Everything is vectorized — no loops needed.

Element-wise Operations

a = np.array([1, 2, 3, 4]) b = np.array([10, 20, 30, 40]) # Arithmetic is element-wise print(a + b) # [11 22 33 44] print(a - b) # [ -9 -18 -27 -36] print(a * b) # [ 10 40 90 160] print(b / a) # [10. 10. 10. 10.] print(a ** 2) # [ 1 4 9 16] # Comparison operators return boolean arrays print(a > 2) # [False False True True] print(a == b) # [False False False False] # Scalar operations are broadcast to every element print(a + 100) # [101 102 103 104] print(a * 3) # [ 3 6 9 12]

Broadcasting

Broadcasting is the mechanism that lets NumPy perform operations on arrays of different shapes. It’s one of the most important concepts to understand:

# Broadcasting a scalar across an array

arr = np.array([[1, 2, 3],

[4, 5, 6]])

print(arr * 10)

# Output:

# [[10 20 30]

# [40 50 60]]

# Broadcasting a 1D array across rows of a 2D array

row = np.array([100, 200, 300])

print(arr + row)

# Output:

# [[101 202 303]

# [104 205 306]]

# Broadcasting a column vector across columns

col = np.array([[10],

[20]])

print(arr + col)

# Output:

# [[11 12 13]

# [24 25 26]]

# Practical example: centering data (subtracting column means)

data = np.array([[1.0, 200, 3000],

[2.0, 400, 6000],

[3.0, 600, 9000]])

col_means = data.mean(axis=0)

print(f"Column means: {col_means}")

# Output: Column means: [2.000e+00 4.000e+02 6.000e+03]

centered = data - col_means

print(centered)

# Output:

# [[-1.000e+00 -2.000e+02 -3.000e+03]

# [ 0.000e+00 0.000e+00 0.000e+00]

# [ 1.000e+00 2.000e+02 3.000e+03]]

Broadcasting rules:

- If arrays have different numbers of dimensions, the shape of the smaller array is padded with ones on the left.

- Arrays with a size of 1 along a particular dimension act as if they had the size of the array with the largest shape along that dimension.

- If sizes don’t match and neither is 1, broadcasting fails with a

ValueError.

Aggregation Functions

arr = np.array([[1, 2, 3],

[4, 5, 6],

[7, 8, 9]])

# Global aggregations

print(f"Sum: {arr.sum()}") # 45

print(f"Mean: {arr.mean()}") # 5.0

print(f"Min: {arr.min()}") # 1

print(f"Max: {arr.max()}") # 9

print(f"Std Dev: {arr.std():.4f}") # 2.5820

# Aggregation along axes

# axis=0 → collapse rows (compute across rows → one value per column)

# axis=1 → collapse columns (compute across columns → one value per row)

print(f"Column sums: {arr.sum(axis=0)}") # [12 15 18]

print(f"Row sums: {arr.sum(axis=1)}") # [ 6 15 24]

print(f"Column means: {arr.mean(axis=0)}") # [4. 5. 6.]

print(f"Row means: {arr.mean(axis=1)}") # [2. 5. 8.]

# Other useful aggregations

print(f"Cumulative sum: {np.array([1,2,3,4]).cumsum()}")

# Output: [ 1 3 6 10]

print(f"Product: {np.array([1,2,3,4]).prod()}")

# Output: 24

# argmin and argmax — index of min/max value

scores = np.array([82, 91, 76, 95, 88])

print(f"Best score index: {scores.argmax()}") # 3

print(f"Worst score index: {scores.argmin()}") # 2

Reshaping Arrays

Reshaping lets you change the dimensions of an array without changing its data. This is critical when preparing data for machine learning models or matrix operations.

reshape()

arr = np.arange(12) print(arr) # Output: [ 0 1 2 3 4 5 6 7 8 9 10 11] # Reshape to 3 rows × 4 columns reshaped = arr.reshape(3, 4) print(reshaped) # Output: # [[ 0 1 2 3] # [ 4 5 6 7] # [ 8 9 10 11]] # Reshape to 4 rows × 3 columns print(arr.reshape(4, 3)) # Output: # [[ 0 1 2] # [ 3 4 5] # [ 6 7 8] # [ 9 10 11]] # Use -1 to let NumPy infer one dimension print(arr.reshape(2, -1)) # 2 rows, auto-compute columns → (2, 6) print(arr.reshape(-1, 3)) # auto-compute rows, 3 columns → (4, 3) # Reshape to 3D print(arr.reshape(2, 2, 3).shape) # Output: (2, 2, 3) # IMPORTANT: total elements must match # arr.reshape(3, 5) # ValueError: cannot reshape array of size 12 into shape (3,5)

flatten() and ravel()

matrix = np.array([[1, 2, 3],

[4, 5, 6]])

# flatten() — always returns a copy

flat = matrix.flatten()

print(flat)

# Output: [1 2 3 4 5 6]

flat[0] = 999

print(matrix[0, 0]) # 1 — original unchanged (it's a copy)

# ravel() — returns a view when possible (more memory efficient)

raveled = matrix.ravel()

print(raveled)

# Output: [1 2 3 4 5 6]

raveled[0] = 999

print(matrix[0, 0]) # 999 — original IS changed (it's a view)

Transpose

matrix = np.array([[1, 2, 3],

[4, 5, 6]])

print(f"Original shape: {matrix.shape}")

# Output: Original shape: (2, 3)

transposed = matrix.T

print(f"Transposed shape: {transposed.shape}")

# Output: Transposed shape: (3, 2)

print(transposed)

# Output:

# [[1 4]

# [2 5]

# [3 6]]

# np.transpose() and .T are equivalent for 2D arrays

# For higher dimensions, np.transpose() lets you specify axis order

arr_3d = np.arange(24).reshape(2, 3, 4)

print(arr_3d.shape) # (2, 3, 4)

print(np.transpose(arr_3d, (1, 0, 2)).shape) # (3, 2, 4)

Stacking and Splitting

Combining and dividing arrays is a common operation when preparing datasets or assembling results.

Stacking Arrays

a = np.array([1, 2, 3]) b = np.array([4, 5, 6]) # Vertical stack — adds rows vs = np.vstack([a, b]) print(vs) # Output: # [[1 2 3] # [4 5 6]] # Horizontal stack — concatenates side by side hs = np.hstack([a, b]) print(hs) # Output: [1 2 3 4 5 6] # 2D stacking m1 = np.array([[1, 2], [3, 4]]) m2 = np.array([[5, 6], [7, 8]]) print(np.vstack([m1, m2])) # Output: # [[1 2] # [3 4] # [5 6] # [7 8]] print(np.hstack([m1, m2])) # Output: # [[1 2 5 6] # [3 4 7 8]] # np.concatenate — general purpose (specify axis) print(np.concatenate([m1, m2], axis=0)) # same as vstack print(np.concatenate([m1, m2], axis=1)) # same as hstack # Column stack — treats 1D arrays as columns c1 = np.array([1, 2, 3]) c2 = np.array([4, 5, 6]) print(np.column_stack([c1, c2])) # Output: # [[1 4] # [2 5] # [3 6]]

Splitting Arrays

arr = np.arange(16).reshape(4, 4)

print(arr)

# Output:

# [[ 0 1 2 3]

# [ 4 5 6 7]

# [ 8 9 10 11]

# [12 13 14 15]]

# Split into 2 equal parts along rows (axis=0)

top, bottom = np.vsplit(arr, 2)

print("Top:\n", top)

# Output:

# [[0 1 2 3]

# [4 5 6 7]]

print("Bottom:\n", bottom)

# Output:

# [[ 8 9 10 11]

# [12 13 14 15]]

# Split into 2 equal parts along columns (axis=1)

left, right = np.hsplit(arr, 2)

print("Left:\n", left)

# Output:

# [[ 0 1]

# [ 4 5]

# [ 8 9]

# [12 13]]

# Split at specific indices

first, second, third = np.split(arr, [1, 3], axis=0)

print(f"First (row 0): {first}")

print(f"Second (rows 1-2):\n{second}")

print(f"Third (row 3): {third}")

Mathematical Functions

NumPy provides a comprehensive set of mathematical functions — all vectorized and optimized.

Universal Functions (ufuncs)

arr = np.array([1, 4, 9, 16, 25]) # Square root print(np.sqrt(arr)) # Output: [1. 2. 3. 4. 5.] # Exponential (e^x) print(np.exp(np.array([0, 1, 2]))) # Output: [1. 2.71828183 7.3890561 ] # Natural logarithm print(np.log(np.array([1, np.e, np.e**2]))) # Output: [0. 1. 2.] # Log base 10 and base 2 print(np.log10(np.array([1, 10, 100, 1000]))) # Output: [0. 1. 2. 3.] print(np.log2(np.array([1, 2, 4, 8]))) # Output: [0. 1. 2. 3.] # Trigonometric functions angles = np.array([0, np.pi/6, np.pi/4, np.pi/3, np.pi/2]) print(np.sin(angles)) # Output: [0. 0.5 0.70710678 0.8660254 1. ] print(np.cos(angles)) # Output: [1.00000000e+00 8.66025404e-01 7.07106781e-01 5.00000000e-01 6.12323400e-17] # Absolute value print(np.abs(np.array([-3, -1, 0, 2, 5]))) # Output: [3 1 0 2 5] # Rounding vals = np.array([1.23, 2.67, 3.5, 4.89]) print(np.round(vals, 1)) # [1.2 2.7 3.5 4.9] print(np.floor(vals)) # [1. 2. 3. 4.] print(np.ceil(vals)) # [2. 3. 4. 5.]

Dot Product and Matrix Multiplication

# Dot product of 1D arrays (scalar result)

a = np.array([1, 2, 3])

b = np.array([4, 5, 6])

print(np.dot(a, b))

# Output: 32 (1*4 + 2*5 + 3*6)

# Matrix multiplication

A = np.array([[1, 2],

[3, 4]])

B = np.array([[5, 6],

[7, 8]])

# Three equivalent ways to multiply matrices

print(np.dot(A, B))

print(A @ B) # @ operator (Python 3.5+)

print(np.matmul(A, B))

# All output:

# [[19 22]

# [43 50]]

# IMPORTANT: * is element-wise, NOT matrix multiplication

print(A * B)

# Output:

# [[ 5 12]

# [21 32]]

# Cross product

print(np.cross(np.array([1, 0, 0]), np.array([0, 1, 0])))

# Output: [0 0 1]

Linear Algebra — np.linalg

A = np.array([[1, 2],

[3, 4]])

# Determinant

print(f"Determinant: {np.linalg.det(A):.1f}")

# Output: Determinant: -2.0

# Inverse

A_inv = np.linalg.inv(A)

print(f"Inverse:\n{A_inv}")

# Output:

# [[-2. 1. ]

# [ 1.5 -0.5]]

# Verify: A × A_inv = Identity

print(np.round(A @ A_inv))

# Output:

# [[1. 0.]

# [0. 1.]]

# Eigenvalues and eigenvectors

eigenvalues, eigenvectors = np.linalg.eig(A)

print(f"Eigenvalues: {eigenvalues}")

print(f"Eigenvectors:\n{eigenvectors}")

# Matrix rank

print(f"Rank: {np.linalg.matrix_rank(A)}")

# Output: Rank: 2

# Norm

print(f"Frobenius norm: {np.linalg.norm(A):.4f}")

# Output: Frobenius norm: 5.4772

Comparison: NumPy vs Python Lists

Understanding why NumPy is faster than Python lists is important for making good design decisions.

Speed Benchmark

import numpy as np

import time

size = 1_000_000

# Python list approach

py_list = list(range(size))

start = time.time()

py_result = [x ** 2 for x in py_list]

py_time = time.time() - start

print(f"Python list: {py_time:.4f} seconds")

# NumPy approach

np_arr = np.arange(size)

start = time.time()

np_result = np_arr ** 2

np_time = time.time() - start

print(f"NumPy array: {np_time:.4f} seconds")

print(f"NumPy is {py_time / np_time:.0f}x faster")

# Typical output:

# Python list: 0.1654 seconds

# NumPy array: 0.0012 seconds

# NumPy is 138x faster

Memory Efficiency

import sys

# Python list of 1000 integers

py_list = list(range(1000))

py_size = sys.getsizeof(py_list) + sum(sys.getsizeof(x) for x in py_list)

print(f"Python list: {py_size:,} bytes")

# NumPy array of 1000 integers

np_arr = np.arange(1000, dtype=np.int64)

print(f"NumPy array: {np_arr.nbytes:,} bytes")

print(f"Python list uses {py_size / np_arr.nbytes:.1f}x more memory")

# Typical output:

# Python list: 36,056 bytes

# NumPy array: 8,000 bytes

# Python list uses 4.5x more memory

Why is NumPy faster?

- Contiguous memory — NumPy arrays are stored as continuous blocks of memory. Python lists store pointers to scattered objects.

- Fixed type — All elements have the same type, so no type-checking per element during operations.

- C-level loops — Operations loop in compiled C code, not interpreted Python.

- SIMD optimization — NumPy can use CPU vector instructions (SSE, AVX) to process multiple elements per clock cycle.

Practical Examples

Example 1: Image as a NumPy Array (Grayscale Manipulation)

Digital images are just NumPy arrays. A grayscale image is a 2D array; a color image is 3D (height × width × channels).

import numpy as np

# Simulate a small 5x5 grayscale image (values 0-255)

image = np.array([

[50, 80, 120, 160, 200],

[55, 85, 125, 165, 205],

[60, 90, 130, 170, 210],

[65, 95, 135, 175, 215],

[70, 100, 140, 180, 220]

], dtype=np.uint8)

print(f"Image shape: {image.shape}")

print(f"Pixel value range: {image.min()} - {image.max()}")

# Invert the image (negative)

inverted = 255 - image

print(f"Inverted:\n{inverted}")

# Increase brightness (clamp to 255)

brightened = np.clip(image.astype(np.int16) + 50, 0, 255).astype(np.uint8)

print(f"Brightened:\n{brightened}")

# Threshold to binary (black/white)

threshold = 128

binary = (image > threshold).astype(np.uint8) * 255

print(f"Binary:\n{binary}")

# Normalize to [0, 1] range (common preprocessing step)

normalized = image.astype(np.float32) / 255.0

print(f"Normalized range: {normalized.min():.2f} - {normalized.max():.2f}")

# Simulate RGB image processing

rgb_image = np.random.randint(0, 256, size=(100, 100, 3), dtype=np.uint8)

print(f"RGB shape: {rgb_image.shape}") # (100, 100, 3)

# Convert to grayscale using weighted average

weights = np.array([0.2989, 0.5870, 0.1140]) # Standard luminance weights

grayscale = np.dot(rgb_image[...,:3], weights).astype(np.uint8)

print(f"Grayscale shape: {grayscale.shape}") # (100, 100)

Example 2: Statistical Analysis of a Dataset

import numpy as np

# Simulate exam scores for 5 subjects, 100 students

np.random.seed(42)

scores = np.random.normal(loc=72, scale=12, size=(100, 5))

scores = np.clip(scores, 0, 100).round(1)

subjects = ['Math', 'Science', 'English', 'History', 'Art']

print("=== Class Statistics ===\n")

# Per-subject statistics

for i, subject in enumerate(subjects):

col = scores[:, i]

print(f"{subject:>10}: mean={col.mean():.1f}, "

f"std={col.std():.1f}, "

f"min={col.min():.1f}, "

f"max={col.max():.1f}, "

f"median={np.median(col):.1f}")

print(f"\n{'Overall':>10}: mean={scores.mean():.1f}, std={scores.std():.1f}")

# Find top 5 students by average score

student_averages = scores.mean(axis=1)

top_5_indices = np.argsort(student_averages)[-5:][::-1]

print(f"\nTop 5 students (by index): {top_5_indices}")

for idx in top_5_indices:

print(f" Student {idx}: avg = {student_averages[idx]:.1f}")

# Correlation between subjects

correlation = np.corrcoef(scores.T)

print(f"\nCorrelation matrix shape: {correlation.shape}")

print(f"Math-Science correlation: {correlation[0, 1]:.3f}")

# Percentile analysis

print(f"\n90th percentile per subject:")

for i, subject in enumerate(subjects):

p90 = np.percentile(scores[:, i], 90)

print(f" {subject}: {p90:.1f}")

# Students scoring above 90 in all subjects

high_achievers = np.all(scores > 90, axis=1)

print(f"\nStudents scoring >90 in ALL subjects: {high_achievers.sum()}")

Example 3: Linear Algebra — Solving a System of Equations

Solving systems of linear equations is a fundamental operation in engineering and data science. Consider:

import numpy as np

# Solve the system:

# 2x + 3y - z = 1

# 4x + y + 2z = 2

# -2x + 7y - 3z = -1

# Coefficient matrix

A = np.array([[2, 3, -1],

[4, 1, 2],

[-2, 7, -3]])

# Constants vector

b = np.array([1, 2, -1])

# Solve using np.linalg.solve (faster and more stable than computing inverse)

x = np.linalg.solve(A, b)

print(f"Solution: x={x[0]:.4f}, y={x[1]:.4f}, z={x[2]:.4f}")

# Verify the solution

residual = A @ x - b

print(f"Residual (should be ~0): {residual}")

print(f"Max error: {np.abs(residual).max():.2e}")

# Least squares solution for overdetermined systems

# (more equations than unknowns — common in data fitting)

# Fit y = mx + c to noisy data

np.random.seed(42)

x_data = np.linspace(0, 10, 50)

y_data = 2.5 * x_data + 1.3 + np.random.normal(0, 1, 50)

# Set up matrix A for y = mx + c

A_fit = np.column_stack([x_data, np.ones(len(x_data))])

# Solve via least squares

result, residuals, rank, sv = np.linalg.lstsq(A_fit, y_data, rcond=None)

m, c = result

print(f"\nLeast squares fit: y = {m:.4f}x + {c:.4f}")

print(f"(True values: y = 2.5000x + 1.3000)")

Example 4: Data Normalization and Standardization

Normalization and standardization are essential preprocessing steps in machine learning. NumPy makes them trivial:

import numpy as np

# Sample dataset: 5 samples with 3 features of different scales

data = np.array([

[25.0, 50000, 3.5],

[30.0, 60000, 4.2],

[22.0, 45000, 3.1],

[35.0, 80000, 4.8],

[28.0, 55000, 3.9]

])

feature_names = ['Age', 'Salary', 'GPA']

print("Original data:")

print(data)

# Min-Max Normalization: scale to [0, 1]

min_vals = data.min(axis=0)

max_vals = data.max(axis=0)

normalized = (data - min_vals) / (max_vals - min_vals)

print(f"\nMin-Max Normalized (range [0, 1]):")

for i, name in enumerate(feature_names):

print(f" {name}: min={normalized[:, i].min():.2f}, max={normalized[:, i].max():.2f}")

print(normalized)

# Z-Score Standardization: mean=0, std=1

mean_vals = data.mean(axis=0)

std_vals = data.std(axis=0)

standardized = (data - mean_vals) / std_vals

print(f"\nZ-Score Standardized (mean≈0, std≈1):")

for i, name in enumerate(feature_names):

print(f" {name}: mean={standardized[:, i].mean():.4f}, std={standardized[:, i].std():.4f}")

print(standardized)

# Robust scaling (using median and IQR — resistant to outliers)

median_vals = np.median(data, axis=0)

q75 = np.percentile(data, 75, axis=0)

q25 = np.percentile(data, 25, axis=0)

iqr = q75 - q25

robust_scaled = (data - median_vals) / iqr

print(f"\nRobust Scaled (using median and IQR):")

print(robust_scaled)

Common Pitfalls

Even experienced developers trip over these. Save yourself the debugging time.

Pitfall 1: View vs Copy

This is the single most common source of bugs in NumPy code:

import numpy as np original = np.array([1, 2, 3, 4, 5]) # Slicing creates a VIEW, not a copy view = original[1:4] view[0] = 999 print(original) # Output: [ 1 999 3 4 5] — original is modified! # To create an independent copy, use .copy() original = np.array([1, 2, 3, 4, 5]) safe_copy = original[1:4].copy() safe_copy[0] = 999 print(original) # Output: [1 2 3 4 5] — original is safe # How to check: use np.shares_memory() a = np.array([1, 2, 3, 4, 5]) b = a[1:4] c = a[1:4].copy() print(np.shares_memory(a, b)) # True — b is a view print(np.shares_memory(a, c)) # False — c is a copy # Boolean and fancy indexing ALWAYS return copies d = a[a > 2] print(np.shares_memory(a, d)) # False

Pitfall 2: Broadcasting Shape Confusion

import numpy as np

a = np.array([[1, 2, 3],

[4, 5, 6]]) # shape (2, 3)

# This works — (3,) broadcasts to (2, 3)

row = np.array([10, 20, 30])

print(a + row)

# This FAILS — shapes (2, 3) and (2,) are incompatible

col_wrong = np.array([10, 20])

try:

print(a + col_wrong)

except ValueError as e:

print(f"Error: {e}")

# Error: operands could not be broadcast together with shapes (2,3) (2,)

# Fix: reshape to column vector (2, 1)

col_right = np.array([[10], [20]]) # shape (2, 1)

print(a + col_right)

# Output:

# [[11 12 13]

# [24 25 26]]

# Alternatively, use np.newaxis (or None — they're the same)

col_also_right = np.array([10, 20])[:, np.newaxis]

print(col_also_right.shape) # (2, 1)

print(a + col_also_right) # same result

Pitfall 3: Integer Overflow with Wrong dtype

import numpy as np # int8 can only hold values from -128 to 127 arr = np.array([100, 120, 130], dtype=np.int8) print(arr) # Output: [100 120 -126] — 130 overflowed silently! result = arr + np.int8(50) print(result) # Output: [-106 -86 -76] — completely wrong, no warning! # Fix: use a larger dtype arr_safe = np.array([100, 120, 130], dtype=np.int32) result_safe = arr_safe + 50 print(result_safe) # Output: [150 170 180] — correct # Watch out with uint8 (common for image data, range 0-255) img_pixel = np.array([250], dtype=np.uint8) print(img_pixel + np.uint8(10)) # Output: [4] — wrapped around! (250 + 10 = 260 → 260 % 256 = 4) # Fix: cast before arithmetic print(img_pixel.astype(np.int16) + 10) # Output: [260] — correct

Pitfall 4: Chained Indexing (Setting Values)

import numpy as np

arr = np.array([[1, 2, 3],

[4, 5, 6]])

# DON'T: Chained indexing may not work for setting values

# arr[arr > 3][0] = 99 # This might NOT modify arr

# DO: Use direct indexing

arr[arr > 3] = 99

print(arr)

# Output:

# [[ 1 2 3]

# [99 99 99]]

# Or use np.where for conditional replacement

arr2 = np.array([[1, 2, 3],

[4, 5, 6]])

result = np.where(arr2 > 3, 99, arr2)

print(result)

# Output:

# [[ 1 2 3]

# [99 99 99]]

Best Practices

Follow these guidelines to write efficient, maintainable NumPy code.

1. Vectorize Instead of Looping

import numpy as np

data = np.random.rand(1_000_000)

# BAD: Python loop

result_slow = np.empty(len(data))

for i in range(len(data)):

result_slow[i] = data[i] ** 2 + 2 * data[i] + 1

# GOOD: Vectorized operation (10-100x faster)

result_fast = data ** 2 + 2 * data + 1

# For custom functions, use np.vectorize (still not as fast as native ufuncs)

def custom_func(x):

if x > 0.5:

return x ** 2

else:

return 0

vectorized_func = np.vectorize(custom_func)

result = vectorized_func(data)

# BEST: Use np.where instead of vectorize

result_best = np.where(data > 0.5, data ** 2, 0)

2. Choose the Right dtype

import numpy as np # Use the smallest dtype that fits your data # Integers small_ints = np.array([1, 2, 3, 4], dtype=np.int8) # -128 to 127 medium_ints = np.array([1, 2, 3, 4], dtype=np.int32) # -2B to 2B big_ints = np.array([1, 2, 3, 4], dtype=np.int64) # default, but 2x memory # Floats — float32 is usually sufficient for ML weights = np.random.randn(1000, 1000).astype(np.float32) # 3.8 MB # vs np.float64 which would be 7.6 MB # Boolean arrays for masks mask = np.zeros(1000, dtype=np.bool_) # 1 byte per element vs 8 for int64

3. Use Broadcasting Instead of Tiling

import numpy as np data = np.random.rand(1000, 3) means = data.mean(axis=0) # shape (3,) # BAD: manually tiling to match shapes means_tiled = np.tile(means, (1000, 1)) # creates unnecessary copy centered_slow = data - means_tiled # GOOD: let broadcasting handle it (no extra memory) centered_fast = data - means # (1000, 3) - (3,) → broadcasting

4. Preallocate Instead of Growing

import numpy as np

n = 10000

# BAD: growing an array with append (copies entire array each time)

result = np.array([])

for i in range(n):

result = np.append(result, i ** 2)

# GOOD: preallocate and fill

result = np.empty(n)

for i in range(n):

result[i] = i ** 2

# BEST: vectorize completely

result = np.arange(n) ** 2

5. Use In-Place Operations When Possible

import numpy as np arr = np.random.rand(1_000_000) # Creates a new array (uses extra memory) arr = arr * 2 # In-place operation (modifies existing array, saves memory) arr *= 2 # NumPy also provides in-place functions np.multiply(arr, 2, out=arr) np.add(arr, 1, out=arr)

Key Takeaways

- NumPy arrays vs Python lists — NumPy arrays are faster (10-100x), more memory efficient, and support vectorized operations. Always prefer NumPy when working with numerical data.

- Avoid Python loops — Think in terms of array operations, not element-by-element processing. Vectorized code is both faster and more readable.

- Understand broadcasting — It’s the key to writing concise, efficient code without manually reshaping arrays.

- Views vs copies — Know that slicing creates views (shared memory) while boolean/fancy indexing creates copies. Use

.copy()when you need independence. - Choose the right dtype — Using

float32instead offloat64halves memory usage. Watch out for integer overflow with small dtypes likeint8anduint8. - Master indexing — Boolean indexing and fancy indexing eliminate the need for most filtering loops. They’re the bread and butter of data manipulation.

- Use np.linalg for linear algebra —

np.linalg.solve()is faster and more numerically stable than computing matrix inverses manually. - Preallocate arrays — Never grow arrays with

np.append()in a loop. Preallocate withnp.empty()ornp.zeros(), or better yet, vectorize the computation entirely. - NumPy is the foundation — Understanding NumPy deeply will make you more effective with pandas, scikit-learn, TensorFlow, PyTorch, and virtually every other data library in Python.

NumPy is one of those libraries where the investment in learning it well pays dividends across your entire Python career. The patterns and concepts here — vectorization, broadcasting, memory-aware programming — are transferable to GPU computing, distributed computing, and any high-performance numerical work.

Python Advanced – Serialization

Introduction

Serialization is the process of converting an in-memory data structure (objects, dictionaries, lists) into a format that can be stored on disk, transmitted over a network, or cached for later retrieval. Deserialization is the reverse — reconstructing the original data structure from the serialized format.

If you have ever saved application state to a file, sent JSON to a REST API, or read a YAML configuration file, you have already been using serialization. It is one of the most fundamental operations in software engineering, and Python gives you several powerful modules to handle it.

Why serialization matters:

- Data persistence — Save program state between sessions (e.g., user preferences, application data)

- API communication — Exchange structured data between services over HTTP (JSON is the lingua franca of modern APIs)

- Caching — Store expensive computation results and reload them instantly

- Inter-process communication — Share data between different programs, languages, or machines

- Configuration management — Store and load application settings in human-readable formats

In this tutorial, we will cover the most important serialization formats and libraries in Python: JSON, pickle, YAML, XML, dataclasses, and marshmallow. Each has its strengths, trade-offs, and ideal use cases.

1. JSON Serialization

JSON (JavaScript Object Notation) is the most widely used serialization format on the web. It is human-readable, language-agnostic, and supported by virtually every programming language. Python’s built-in json module handles JSON serialization and deserialization out of the box.

1.1 — json.dumps() and json.loads() (Working with Strings)

Use json.dumps() to serialize a Python object to a JSON string, and json.loads() to deserialize a JSON string back to a Python object.

import json

# Serialize Python dict to JSON string

user = {

"name": "Folau",

"age": 30,

"email": "folau@example.com",

"skills": ["Python", "Java", "AWS"],

"active": True

}

json_string = json.dumps(user)

print(json_string)

# {"name": "Folau", "age": 30, "email": "folau@example.com", "skills": ["Python", "Java", "AWS"], "active": true}

print(type(json_string))

# <class 'str'>

# Deserialize JSON string back to Python dict

parsed = json.loads(json_string)

print(parsed["name"]) # Folau

print(parsed["skills"]) # ['Python', 'Java', 'AWS']

print(type(parsed)) # <class 'dict'>

Notice that Python’s True becomes JSON’s true, and None becomes null. The json module handles these conversions automatically.

1.2 — json.dump() and json.load() (Working with Files)

When you need to write JSON directly to a file or read from one, use json.dump() and json.load() (without the trailing “s”).

import json

user = {

"name": "Folau",

"age": 30,

"roles": ["admin", "developer"]

}

# Write to file

with open("user.json", "w") as f:

json.dump(user, f, indent=2)

# Read from file

with open("user.json", "r") as f:

loaded_user = json.load(f)

print(loaded_user)

# {'name': 'Folau', 'age': 30, 'roles': ['admin', 'developer']}

Tip: Always use with statements for file operations. It guarantees the file is properly closed even if an exception occurs.

1.3 — Pretty Printing, sort_keys, and indent

The json.dumps() function accepts several formatting options that make output more readable.

import json

config = {

"database": {

"host": "localhost",

"port": 5432,

"name": "myapp_db"

},

"cache": {

"enabled": True,

"ttl_seconds": 300

},

"debug": False

}

# Pretty print with 4-space indentation

pretty = json.dumps(config, indent=4)

print(pretty)

# Sort keys alphabetically

sorted_json = json.dumps(config, indent=2, sort_keys=True)

print(sorted_json)

# Compact output (minimize whitespace)

compact = json.dumps(config, separators=(",", ":"))

print(compact)

# {"database":{"host":"localhost","port":5432,"name":"myapp_db"},"cache":{"enabled":true,"ttl_seconds":300},"debug":false}

Use indent for config files and logs where readability matters. Use separators=(",", ":") when you need minimal payload size (e.g., sending data over a network).

1.4 — Handling Non-Serializable Types

The json module can only serialize basic Python types: dict, list, str, int, float, bool, and None. Anything else will raise a TypeError. This commonly happens with datetime objects, sets, custom classes, and bytes.

import json

from datetime import datetime

data = {

"event": "deployment",

"timestamp": datetime.now()

}

# This will FAIL

try:

json.dumps(data)

except TypeError as e:

print(f"Error: {e}")

# Error: Object of type datetime is not JSON serializable

The simplest fix is the default parameter, which provides a fallback serializer for unsupported types.

import json

from datetime import datetime, date

from decimal import Decimal

def json_serializer(obj):

"""Custom serializer for objects not handled by default json encoder."""

if isinstance(obj, (datetime, date)):

return obj.isoformat()

if isinstance(obj, Decimal):

return float(obj)

if isinstance(obj, set):

return list(obj)

if isinstance(obj, bytes):

return obj.decode("utf-8")

raise TypeError(f"Type {type(obj)} is not JSON serializable")

data = {

"event": "deployment",

"timestamp": datetime.now(),

"cost": Decimal("49.99"),

"tags": {"urgent", "production"},

"payload": b"raw bytes here"

}

result = json.dumps(data, default=json_serializer, indent=2)

print(result)

1.5 — Custom JSONEncoder

For more control, subclass json.JSONEncoder. This is cleaner when you have complex serialization logic that you want to reuse across your application.

import json

from datetime import datetime, date

from decimal import Decimal

class AppJSONEncoder(json.JSONEncoder):

"""Custom JSON encoder for application-specific types."""

def default(self, obj):

if isinstance(obj, (datetime, date)):

return obj.isoformat()

if isinstance(obj, Decimal):

return str(obj)

if isinstance(obj, set):

return sorted(list(obj))

if isinstance(obj, bytes):

return obj.decode("utf-8")

# Let the base class raise TypeError for unknown types

return super().default(obj)

data = {

"user": "Folau",

"created_at": datetime(2024, 1, 15, 10, 30, 0),

"balance": Decimal("1250.75"),

"permissions": {"read", "write", "admin"}

}

print(json.dumps(data, cls=AppJSONEncoder, indent=2))

When to use which approach:

defaultparameter — Quick one-off serializationJSONEncodersubclass — Reusable across your codebase, better for large projects

2. pickle Module — Binary Serialization

While JSON handles basic data types, Python’s pickle module can serialize almost any Python object — including classes, functions, nested structures, and even lambda expressions. The trade-off is that pickle output is binary (not human-readable) and Python-specific (other languages cannot read it).

2.1 — pickle.dumps()/loads() and dump()/load()

import pickle

# A complex Python object that JSON cannot handle

class User:

def __init__(self, name, age, scores):

self.name = name

self.age = age

self.scores = scores

def average_score(self):

return sum(self.scores) / len(self.scores)

def __repr__(self):

return f"User(name={self.name}, age={self.age})"

user = User("Folau", 30, [95, 88, 72, 90])

# Serialize to bytes

pickled = pickle.dumps(user)

print(type(pickled)) # <class 'bytes'>

print(len(pickled)) # varies

# Deserialize back to object

restored = pickle.loads(pickled)

print(restored) # User(name=Folau, age=30)

print(restored.average_score()) # 86.25

import pickle

user = User("Folau", 30, [95, 88, 72, 90])

# Write to file (binary mode!)

with open("user.pkl", "wb") as f:

pickle.dump(user, f)

# Read from file

with open("user.pkl", "rb") as f:

loaded_user = pickle.load(f)

print(loaded_user.name) # Folau

print(loaded_user.average_score()) # 86.25

Important: Always open pickle files in binary mode ("wb" and "rb"). Pickle produces bytes, not text.

2.2 — pickle vs JSON: When to Use Each

| Feature | JSON | pickle |

|---|---|---|

| Human-readable | Yes | No (binary) |

| Language support | Universal | Python only |

| Custom objects | Requires custom encoder | Works out of the box |

| Security | Safe to deserialize | Can execute arbitrary code |

| Speed | Moderate | Fast for Python objects |

| Best for | APIs, config files, data exchange | Caching, internal Python storage |

2.3 — Security Warning

WARNING: Never unpickle data from untrusted sources! Pickle can execute arbitrary code during deserialization. A malicious pickle payload can run system commands, delete files, or open network connections. Only use pickle with data you created yourself or from a fully trusted source.

import pickle

import os

# This is what a MALICIOUS pickle payload looks like.

# DO NOT run this — it demonstrates the danger.

class Malicious:

def __reduce__(self):

# This would execute a system command when unpickled!

return (os.system, ("echo 'You have been hacked!'",))

# If someone sends you a pickle file, it could contain code like this.

# NEVER do: pickle.loads(untrusted_data)

# SAFE alternatives for untrusted data:

# - Use json.loads() for JSON data

# - Use yaml.safe_load() for YAML data

# - Use pickle only for data YOU created

3. YAML Serialization with PyYAML

YAML (YAML Ain’t Markup Language) is popular for configuration files because it is more human-friendly than JSON — no braces, no quotes around keys, and it supports comments. Python uses the PyYAML library to work with YAML.

# Install first: pip install pyyaml

import yaml

# Python dict to YAML string

config = {

"database": {

"host": "localhost",

"port": 5432,

"name": "myapp_db",

"credentials": {

"username": "admin",

"password": "secret"

}

},

"logging": {

"level": "INFO",

"file": "/var/log/app.log"

},

"features": ["auth", "caching", "rate_limiting"]

}

yaml_string = yaml.dump(config, default_flow_style=False, sort_keys=False)

print(yaml_string)

Output:

database:

host: localhost

port: 5432

name: myapp_db

credentials:

username: admin

password: secret

logging:

level: INFO

file: /var/log/app.log

features:

- auth

- caching

- rate_limiting

3.1 — Reading YAML Files (Always Use safe_load)

import yaml yaml_content = """ server: host: 0.0.0.0 port: 8080 workers: 4 database: url: postgresql://localhost:5432/myapp pool_size: 10 # Timeout in seconds timeout: 30 features: - authentication - rate_limiting - caching """ # ALWAYS use safe_load, never yaml.load() without a Loader config = yaml.safe_load(yaml_content) print(config["server"]["port"]) # 8080 print(config["database"]["url"]) # postgresql://localhost:5432/myapp print(config["features"]) # ['authentication', 'rate_limiting', 'caching']

3.2 — Config File Use Case

import yaml

import os

def load_config(config_path="config.yaml"):

"""Load application configuration from YAML file."""

if not os.path.exists(config_path):

raise FileNotFoundError(f"Config file not found: {config_path}")

with open(config_path, "r") as f:

config = yaml.safe_load(f)

# Override with environment variables if set

if os.environ.get("DB_HOST"):

config["database"]["host"] = os.environ["DB_HOST"]

if os.environ.get("DB_PASSWORD"):

config["database"]["password"] = os.environ["DB_PASSWORD"]

return config

def save_config(config, config_path="config.yaml"):

"""Save configuration back to YAML file."""

with open(config_path, "w") as f:

yaml.dump(config, f, default_flow_style=False, sort_keys=False)

# Usage

# config = load_config("config.yaml")

# print(config["database"]["host"])

Why YAML over JSON for config? YAML supports comments, is easier to read and edit by hand, and does not require quotes around string keys. JSON is better for data interchange because it is stricter and more widely supported programmatically.

4. XML Basics with ElementTree

XML (eXtensible Markup Language) is less common for new projects but still widely used in enterprise systems, SOAP APIs, and legacy codebases. Python’s standard library includes xml.etree.ElementTree for working with XML.

import xml.etree.ElementTree as ET

# Create XML programmatically

root = ET.Element("users")

user1 = ET.SubElement(root, "user", id="1")

ET.SubElement(user1, "name").text = "Folau"

ET.SubElement(user1, "email").text = "folau@example.com"

ET.SubElement(user1, "role").text = "admin"

user2 = ET.SubElement(root, "user", id="2")

ET.SubElement(user2, "name").text = "Jane"

ET.SubElement(user2, "email").text = "jane@example.com"

ET.SubElement(user2, "role").text = "developer"

# Convert to string

xml_string = ET.tostring(root, encoding="unicode", xml_declaration=True)

print(xml_string)

import xml.etree.ElementTree as ET

# Parse XML string

xml_data = """

<users>

<user id="1">

<name>Folau</name>

<email>folau@example.com</email>

<role>admin</role>

</user>

<user id="2">

<name>Jane</name>

<email>jane@example.com</email>

<role>developer</role>

</user>

</users>

"""

root = ET.fromstring(xml_data)

for user in root.findall("user"):

user_id = user.get("id")

name = user.find("name").text

email = user.find("email").text

role = user.find("role").text

print(f"ID: {user_id}, Name: {name}, Email: {email}, Role: {role}")

# Output:

# ID: 1, Name: Folau, Email: folau@example.com, Role: admin

# ID: 2, Name: Jane, Email: jane@example.com, Role: developer

When to use XML: SOAP web services, configuration files for Java-based systems (Maven pom.xml, Android manifests), RSS/Atom feeds, and legacy integrations. For new Python projects, JSON or YAML are almost always better choices.

5. dataclasses and Serialization

Python’s dataclasses module (introduced in Python 3.7) provides a clean way to define data-holding classes. Combined with the dataclasses.asdict() function, they integrate well with JSON serialization.

import json

from dataclasses import dataclass, asdict, field

from typing import List

@dataclass

class Address:

street: str

city: str

state: str

zip_code: str

@dataclass

class Employee:

name: str

age: int

department: str

skills: List[str] = field(default_factory=list)

address: Address = None

def to_json(self):

"""Serialize to JSON string."""

return json.dumps(asdict(self), indent=2)

@classmethod

def from_json(cls, json_string):

"""Deserialize from JSON string."""

data = json.loads(json_string)

# Handle nested Address object

if data.get("address"):

data["address"] = Address(**data["address"])

return cls(**data)

# Create and serialize

employee = Employee(

name="Folau",

age=30,

department="Engineering",

skills=["Python", "AWS", "Docker"],

address=Address("123 Main St", "San Francisco", "CA", "94102")

)

json_output = employee.to_json()

print(json_output)

# Deserialize back

restored = Employee.from_json(json_output)

print(restored.name) # Folau

print(restored.address.city) # San Francisco

print(restored.skills) # ['Python', 'AWS', 'Docker']

Why dataclasses for serialization?

- Type hints serve as documentation for your data structure

asdict()provides automatic conversion to a dictionary (ready forjson.dumps())- Default values, field factories, and frozen instances are built in

- No external dependencies required

6. marshmallow — Schema-Based Serialization

For production applications that need validation, type coercion, and well-defined schemas, the marshmallow library is the gold standard. It separates your data model from your serialization logic, which keeps things clean as your application grows.

# Install first: pip install marshmallow

from marshmallow import Schema, fields, validate, post_load

class User:

def __init__(self, name, email, age, role="viewer"):

self.name = name

self.email = email

self.age = age

self.role = role

def __repr__(self):

return f"User(name={self.name}, email={self.email}, role={self.role})"

class UserSchema(Schema):

name = fields.Str(required=True, validate=validate.Length(min=1, max=100))

email = fields.Email(required=True)

age = fields.Int(required=True, validate=validate.Range(min=0, max=150))

role = fields.Str(validate=validate.OneOf(["admin", "editor", "viewer"]))

@post_load

def make_user(self, data, **kwargs):

return User(**data)

schema = UserSchema()

# Deserialize (load) — validates and creates object

user_data = {"name": "Folau", "email": "folau@example.com", "age": 30, "role": "admin"}

user = schema.load(user_data)

print(user) # User(name=Folau, email=folau@example.com, role=admin)

# Serialize (dump) — converts object to dict

output = schema.dump(user)

print(output) # {'name': 'Folau', 'email': 'folau@example.com', 'age': 30, 'role': 'admin'}

# Validation error example

try:

bad_data = {"name": "", "email": "not-an-email", "age": -5}

schema.load(bad_data)

except Exception as e:

print(f"Validation errors: {e}")

Key benefits of marshmallow:

- Validation — Enforce constraints on incoming data

- Type coercion — Automatically convert strings to integers, dates, etc.

- Nested schemas — Handle complex, nested data structures

- Partial loading — Allow updates with only some fields

- Custom fields — Define your own field types and validators

7. Practical Examples

7.1 — REST API Data Processing

This is one of the most common real-world serialization tasks: fetching data from a REST API, processing it, and serializing the results.

import json

import urllib.request

from dataclasses import dataclass, asdict

from typing import List, Optional

@dataclass

class Todo:

id: int

title: str

completed: bool

user_id: int

@classmethod

def from_api_response(cls, data: dict) -> "Todo":

"""Create Todo from API response dict."""

return cls(

id=data["id"],

title=data["title"],

completed=data["completed"],

user_id=data["userId"]

)

def fetch_todos(limit: int = 10) -> List[Todo]:

"""Fetch todos from JSONPlaceholder API."""

url = f"https://jsonplaceholder.typicode.com/todos?_limit={limit}"

with urllib.request.urlopen(url) as response:

data = json.loads(response.read().decode())

return [Todo.from_api_response(item) for item in data]

def save_todos(todos: List[Todo], filepath: str):

"""Serialize todos to JSON file."""

data = [asdict(todo) for todo in todos]

with open(filepath, "w") as f:

json.dump(data, f, indent=2)

print(f"Saved {len(todos)} todos to {filepath}")

def load_todos(filepath: str) -> List[Todo]:

"""Deserialize todos from JSON file."""

with open(filepath, "r") as f:

data = json.load(f)

return [Todo(**item) for item in data]

# Fetch from API, process, and save

todos = fetch_todos(limit=5)

completed = [t for t in todos if t.completed]

print(f"Completed: {len(completed)} / {len(todos)}")

save_todos(todos, "todos.json")

restored = load_todos("todos.json")

print(f"Loaded {len(restored)} todos from file")

7.2 — JSON-Based Config File Manager

import json

import os

from datetime import datetime

class ConfigManager:

"""Manage application configuration with JSON persistence."""

def __init__(self, config_path="app_config.json"):

self.config_path = config_path

self.config = self._load_or_create()

def _load_or_create(self):

"""Load existing config or create default."""

if os.path.exists(self.config_path):

with open(self.config_path, "r") as f:

return json.load(f)

return self._default_config()

def _default_config(self):

"""Return default configuration."""

return {

"app_name": "MyApp",

"version": "1.0.0",

"database": {

"host": "localhost",

"port": 5432,

"name": "myapp_db"

},

"logging": {

"level": "INFO",

"file": "app.log"

},

"last_modified": datetime.now().isoformat()

}

def get(self, key, default=None):

"""Get a config value using dot notation: 'database.host'."""

keys = key.split(".")

value = self.config

for k in keys:

if isinstance(value, dict) and k in value:

value = value[k]

else:

return default

return value

def set(self, key, value):

"""Set a config value using dot notation."""

keys = key.split(".")

config = self.config

for k in keys[:-1]:

config = config.setdefault(k, {})

config[keys[-1]] = value

self.config["last_modified"] = datetime.now().isoformat()

self._save()

def _save(self):

"""Persist config to disk."""

with open(self.config_path, "w") as f:

json.dump(self.config, f, indent=2)

# Usage

config = ConfigManager("app_config.json")

print(config.get("database.host")) # localhost

print(config.get("logging.level")) # INFO

config.set("database.host", "db.production.com")

config.set("logging.level", "WARNING")

print(config.get("database.host")) # db.production.com

7.3 — Data Export/Import System (CSV + JSON)

import json

import csv

import os

class DataExporter:

"""Export and import data between JSON and CSV formats."""

@staticmethod

def json_to_csv(json_path, csv_path):

"""Convert a JSON array of objects to CSV."""

with open(json_path, "r") as f:

data = json.load(f)

if not data:

print("No data to export")

return

# Use keys from first record as CSV headers

headers = list(data[0].keys())

with open(csv_path, "w", newline="") as f:

writer = csv.DictWriter(f, fieldnames=headers)

writer.writeheader()

writer.writerows(data)

print(f"Exported {len(data)} records to {csv_path}")

@staticmethod

def csv_to_json(csv_path, json_path):

"""Convert CSV to JSON array of objects."""

records = []

with open(csv_path, "r") as f:

reader = csv.DictReader(f)

for row in reader:

records.append(dict(row))

with open(json_path, "w") as f:

json.dump(records, f, indent=2)

print(f"Imported {len(records)} records to {json_path}")

@staticmethod

def export_summary(data, output_path):

"""Export a summary report as JSON."""

summary = {

"total_records": len(data),

"exported_at": __import__("datetime").datetime.now().isoformat(),

"sample": data[:3] if len(data) >= 3 else data

}

with open(output_path, "w") as f:

json.dump(summary, f, indent=2)

print(f"Summary saved to {output_path}")

# Example usage

employees = [

{"name": "Folau", "department": "Engineering", "salary": 95000},

{"name": "Jane", "department": "Marketing", "salary": 85000},

{"name": "Bob", "department": "Engineering", "salary": 90000},

]

# Save as JSON

with open("employees.json", "w") as f:

json.dump(employees, f, indent=2)

# Convert JSON to CSV

exporter = DataExporter()

exporter.json_to_csv("employees.json", "employees.csv")

exporter.csv_to_json("employees.csv", "employees_restored.json")

7.4 — Caching Expensive Computations with pickle

import pickle

import os

import time

import hashlib

from functools import wraps

def pickle_cache(cache_dir=".cache"):

"""Decorator that caches function results using pickle."""

def decorator(func):

@wraps(func)

def wrapper(*args, **kwargs):

# Create cache directory if needed

os.makedirs(cache_dir, exist_ok=True)

# Generate a unique cache key from function name and arguments

key_data = f"{func.__name__}:{args}:{sorted(kwargs.items())}"

cache_key = hashlib.md5(key_data.encode()).hexdigest()

cache_path = os.path.join(cache_dir, f"{cache_key}.pkl")

# Return cached result if available

if os.path.exists(cache_path):

print(f"Cache HIT for {func.__name__}")

with open(cache_path, "rb") as f:

return pickle.load(f)

# Compute and cache the result

print(f"Cache MISS for {func.__name__} — computing...")

result = func(*args, **kwargs)

with open(cache_path, "wb") as f:

pickle.dump(result, f)

return result

return wrapper

return decorator

@pickle_cache()

def expensive_computation(n):

"""Simulate a slow computation."""

time.sleep(2) # Pretend this takes a long time

return {i: i ** 3 for i in range(n)}

# First call: takes 2 seconds (cache MISS)

start = time.time()

result1 = expensive_computation(1000)

print(f"First call: {time.time() - start:.2f}s")

# Second call: instant (cache HIT)

start = time.time()

result2 = expensive_computation(1000)

print(f"Second call: {time.time() - start:.2f}s")

print(f"Results match: {result1 == result2}")

8. Common Pitfalls

8.1 — Security: pickle and Untrusted Data

This is the single most important pitfall. As demonstrated earlier, pickle.loads() can execute arbitrary code. Never use pickle to deserialize data from user input, external APIs, or any untrusted source. Use JSON instead.

8.2 — Encoding Issues

import json

# Problem: non-ASCII characters

data = {"city": "Sao Paulo", "greeting": "Hola, como estas?"}

# Default behavior escapes non-ASCII

print(json.dumps(data))

# {"city": "Sao Paulo", "greeting": "Hola, \u00bfcomo est\u00e1s?"}

# Fix: use ensure_ascii=False

print(json.dumps(data, ensure_ascii=False))

# {"city": "Sao Paulo", "greeting": "Hola, como estas?"}

# When writing to files, always specify encoding

with open("data.json", "w", encoding="utf-8") as f:

json.dump(data, f, ensure_ascii=False, indent=2)

8.3 — Circular References

import json

# This will raise ValueError: Circular reference detected

a = {}

b = {"ref": a}

a["ref"] = b

try:

json.dumps(a)

except ValueError as e:

print(f"Error: {e}") # Circular reference detected

# Solution: break circular references before serializing

# or use a custom encoder that tracks visited objects

8.4 — datetime Handling

import json

from datetime import datetime

# Problem: datetime is not JSON-serializable

event = {"name": "Deploy", "timestamp": datetime.now()}

# Solution 1: Convert to ISO format string

event["timestamp"] = event["timestamp"].isoformat()

print(json.dumps(event))

# Solution 2: Use the default parameter

def default_handler(obj):

if hasattr(obj, "isoformat"):

return obj.isoformat()

raise TypeError(f"Cannot serialize {type(obj)}")

event2 = {"name": "Deploy", "timestamp": datetime.now()}

print(json.dumps(event2, default=default_handler))

# Deserializing back to datetime

json_str = '{"name": "Deploy", "timestamp": "2024-01-15T10:30:00"}'

data = json.loads(json_str)

data["timestamp"] = datetime.fromisoformat(data["timestamp"])

print(type(data["timestamp"])) # <class 'datetime.datetime'>

8.5 — JSON Keys Must Be Strings

import json

# Python allows non-string keys in dicts

data = {1: "one", 2: "two", (3, 4): "tuple_key"}

# JSON only allows string keys — this converts int keys to strings

result = json.dumps({1: "one", 2: "two"})

print(result) # {"1": "one", "2": "two"}

parsed = json.loads(result)

print(parsed["1"]) # "one" — note the key is now a string!

# print(parsed[1]) # KeyError! The key is "1", not 1

# Tuple keys will raise TypeError

try:

json.dumps(data)

except TypeError as e:

print(f"Error: {e}")

9. Best Practices

After years of working with serialization in production systems, here are the practices that matter most:

- Use JSON for human-readable data exchange. It is the standard for APIs, configuration files that humans edit, and any data shared between different languages or systems.

- Use pickle only for Python-internal storage. Caching computation results, saving ML models, or storing session data between runs of the same Python application. Never expose pickle data to the outside world.

- Validate on deserialization. Never trust incoming data. Validate structure, types, and ranges after deserializing — whether from a file, API, or user input. Libraries like marshmallow and pydantic make this easy.

- Handle encoding explicitly. Always specify

encoding="utf-8"when opening files, and useensure_ascii=Falseif your data contains non-ASCII characters. - Use

yaml.safe_load(), neveryaml.load()without a Loader. The fullyaml.load()can execute arbitrary Python code, similar to pickle. - Define clear serialization boundaries. Use

to_dict()/from_dict()methods on your classes, or use schemas (marshmallow) to define exactly what gets serialized and how. - Version your serialized formats. Include a version field in your serialized data so you can handle format changes gracefully over time.

- Handle missing fields gracefully. When deserializing, use

.get()with defaults rather than direct key access. Data schemas evolve, and old serialized data may lack newer fields. - Keep serialization logic separate from business logic. Do not scatter

json.dumps()calls throughout your code. Centralize serialization in dedicated methods or schema classes. - Use appropriate formats for the job. YAML for config files that humans edit. JSON for API communication. pickle for Python-internal caching. CSV for tabular data that needs spreadsheet compatibility. XML only when integrating with systems that require it.

10. Key Takeaways

- Serialization converts Python objects to a storable/transmittable format; deserialization reverses the process.

- JSON (

jsonmodule) is the go-to format for APIs and human-readable data. Usedumps/loadsfor strings,dump/loadfor files. - pickle handles any Python object but produces binary, Python-only output. Never unpickle untrusted data.

- YAML (

PyYAML) excels at configuration files. Always usesafe_load(). - XML (

ElementTree) is for enterprise/legacy integrations. - dataclasses +

asdict()provide a clean, zero-dependency path from Python objects to JSON. - marshmallow adds validation and schema enforcement for production applications.

- Handle

datetime, encoding, and non-string keys explicitly — they are the most common sources of serialization bugs. - Always validate deserialized data. Never trust the source blindly.

Python Advanced – Map, Reduce, and Filter

Introduction

Functional programming is a paradigm that treats computation as the evaluation of mathematical functions. Rather than telling the computer how to do something step by step (imperative style), you describe what you want to achieve by composing pure functions that transform data without side effects.

Python is not a purely functional language, but it borrows heavily from the functional tradition. Three of the most important functional tools in Python are map(), filter(), and reduce(). These functions let you process collections of data in a declarative, composable way — and understanding them will make you a stronger Python developer.

Here is why these three functions matter:

- map() transforms every element in a collection.

- filter() selects elements that meet a condition.

- reduce() collapses a collection into a single value.

Together, they form the backbone of data processing pipelines. Whether you are cleaning datasets, transforming API responses, or building ETL jobs, you will reach for these tools constantly.

map()

Syntax

map(function, iterable, *iterables)

map() applies a function to every item in one or more iterables and returns a map object (an iterator). It does not modify the original data — it produces a new sequence of transformed values.

# Basic usage numbers = [1, 2, 3, 4, 5] squared = map(lambda x: x ** 2, numbers) print(list(squared)) # Output: [1, 4, 9, 16, 25]

Notice that map() returns an iterator, not a list. You need to wrap it in list() to see all the values at once. This lazy evaluation is by design — it is memory efficient for large datasets.

Example 1: Converting Temperatures (Celsius to Fahrenheit)

def celsius_to_fahrenheit(celsius):

return (celsius * 9/5) + 32

temperatures_c = [0, 20, 37, 100]

temperatures_f = list(map(celsius_to_fahrenheit, temperatures_c))

print(temperatures_f)

# Output: [32.0, 68.0, 98.6, 212.0]

This is clean, readable, and intention-revealing. The function name tells you exactly what transformation is happening. No loop boilerplate, no index management.

Example 2: Extracting Data from a List of Dictionaries

This is a pattern you will use all the time when working with API responses or database results.

employees = [

{"name": "Alice", "department": "Engineering", "salary": 95000},

{"name": "Bob", "department": "Marketing", "salary": 72000},

{"name": "Charlie", "department": "Engineering", "salary": 105000},

{"name": "Diana", "department": "HR", "salary": 68000},

]

# Extract just the names

names = list(map(lambda emp: emp["name"], employees))

print(names)

# Output: ['Alice', 'Bob', 'Charlie', 'Diana']

# Extract name and salary as tuples

name_salary = list(map(lambda emp: (emp["name"], emp["salary"]), employees))

print(name_salary)

# Output: [('Alice', 95000), ('Bob', 72000), ('Charlie', 105000), ('Diana', 68000)]

Example 3: Using map() with Multiple Iterables

When you pass multiple iterables to map(), the function must accept that many arguments. The iteration stops when the shortest iterable is exhausted.

# Add corresponding elements from two lists

list_a = [1, 2, 3, 4]

list_b = [10, 20, 30, 40]

sums = list(map(lambda a, b: a + b, list_a, list_b))

print(sums)

# Output: [11, 22, 33, 44]

# Calculate weighted scores

scores = [85, 92, 78, 95]

weights = [0.2, 0.3, 0.25, 0.25]

weighted = list(map(lambda s, w: round(s * w, 2), scores, weights))

print(weighted)

# Output: [17.0, 27.6, 19.5, 23.75]

total_weighted_score = sum(weighted)

print(f"Total weighted score: {total_weighted_score}")

# Output: Total weighted score: 87.85

map() vs List Comprehension

In Python, list comprehensions can do everything map() does and are often considered more Pythonic.

numbers = [1, 2, 3, 4, 5] # Using map squared_map = list(map(lambda x: x ** 2, numbers)) # Using list comprehension squared_comp = [x ** 2 for x in numbers] # Both produce: [1, 4, 9, 16, 25]

When to use map():

- When you already have a named function to apply —

list(map(str, numbers))is cleaner than[str(x) for x in numbers]. - When you need lazy evaluation (do not wrap in

list()). - When working with multiple iterables simultaneously.

When to use list comprehension:

- When the transformation logic is inline and simple.

- When you also need to filter (comprehensions combine map and filter naturally).

- When readability matters more than functional purity.

filter()

Syntax

filter(function, iterable)

filter() takes a function that returns True or False (a predicate) and an iterable. It returns an iterator containing only the elements for which the predicate returned True.

# Basic usage numbers = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10] evens = list(filter(lambda x: x % 2 == 0, numbers)) print(evens) # Output: [2, 4, 6, 8, 10]

Example 1: Filtering Even and Odd Numbers

numbers = range(1, 21) # 1 through 20

evens = list(filter(lambda x: x % 2 == 0, numbers))

odds = list(filter(lambda x: x % 2 != 0, numbers))

print(f"Even: {evens}")

# Output: Even: [2, 4, 6, 8, 10, 12, 14, 16, 18, 20]

print(f"Odd: {odds}")

# Output: Odd: [1, 3, 5, 7, 9, 11, 13, 15, 17, 19]

Example 2: Filtering Valid Emails from a List

Here is a practical example you might encounter when processing user input or cleaning data.

import re

def is_valid_email(email):

"""Basic email validation."""

pattern = r'^[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}$'

return bool(re.match(pattern, email))

emails = [

"alice@example.com",

"bob@company.org",

"not-an-email",

"charlie@",

"diana@domain.co.uk",

"@missing-local.com",

"eve@valid.io",

]

valid_emails = list(filter(is_valid_email, emails))

print(valid_emails)

# Output: ['alice@example.com', 'bob@company.org', 'diana@domain.co.uk', 'eve@valid.io']

invalid_emails = list(filter(lambda e: not is_valid_email(e), emails))

print(invalid_emails)

# Output: ['not-an-email', 'charlie@', '@missing-local.com']

Example 3: Filtering Objects by Attribute

class Product:

def __init__(self, name, price, in_stock):

self.name = name

self.price = price

self.in_stock = in_stock

def __repr__(self):

return f"Product({self.name}, ${self.price}, {'In Stock' if self.in_stock else 'Out of Stock'})"

products = [

Product("Laptop", 999.99, True),

Product("Mouse", 29.99, True),

Product("Keyboard", 79.99, False),

Product("Monitor", 349.99, True),

Product("Webcam", 69.99, False),

Product("Headset", 149.99, True),

]

# Filter products that are in stock and under $200

affordable_in_stock = list(filter(

lambda p: p.in_stock and p.price < 200,

products

))

print(affordable_in_stock)

# Output: [Product(Mouse, $29.99, In Stock), Product(Headset, $149.99, In Stock)]

Using None as the filter function

If you pass None as the function, filter() removes all falsy values from the iterable.

mixed = [0, 1, "", "hello", None, True, False, [], [1, 2], {}, {"key": "val"}]

truthy_values = list(filter(None, mixed))

print(truthy_values)

# Output: [1, 'hello', True, [1, 2], {'key': 'val'}]

This is a clean way to strip out empty strings, zeros, None values, and empty collections in one shot.

filter() vs List Comprehension

numbers = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10] # Using filter evens_filter = list(filter(lambda x: x % 2 == 0, numbers)) # Using list comprehension evens_comp = [x for x in numbers if x % 2 == 0] # Both produce: [2, 4, 6, 8, 10]

The list comprehension is arguably more readable here. But filter() shines when you already have a named predicate function — list(filter(is_valid_email, emails)) reads almost like English.

reduce()

Syntax

from functools import reduce reduce(function, iterable[, initializer])

reduce() applies a function of two arguments cumulatively to the items in an iterable, from left to right, reducing the iterable to a single value. Unlike map() and filter(), reduce() is not a built-in — you must import it from the functools module.

Here is how it works step by step:

from functools import reduce numbers = [1, 2, 3, 4, 5] # Step-by-step: reduce(lambda a, b: a + b, [1, 2, 3, 4, 5]) # Step 1: a=1, b=2 -> 3 # Step 2: a=3, b=3 -> 6 # Step 3: a=6, b=4 -> 10 # Step 4: a=10, b=5 -> 15 total = reduce(lambda a, b: a + b, numbers) print(total) # Output: 15

Example 1: Summing Numbers

from functools import reduce

# Sum of all numbers

numbers = [10, 20, 30, 40, 50]

total = reduce(lambda acc, x: acc + x, numbers)

print(f"Sum: {total}")

# Output: Sum: 150

# Of course, Python has a built-in sum() for this.

# But reduce() generalizes to any binary operation.

print(f"Sum (built-in): {sum(numbers)}")

# Output: Sum (built-in): 150

Example 2: Finding the Maximum Value

from functools import reduce

numbers = [34, 12, 89, 45, 67, 23, 91, 56]

maximum = reduce(lambda a, b: a if a > b else b, numbers)

print(f"Maximum: {maximum}")

# Output: Maximum: 91

minimum = reduce(lambda a, b: a if a < b else b, numbers)

print(f"Minimum: {minimum}")

# Output: Minimum: 12

Again, Python has max() and min() built-ins for this. But this demonstrates the pattern: reduce() compresses a collection by repeatedly applying a binary operation.

Example 3: Flattening a List of Lists

from functools import reduce nested = [[1, 2, 3], [4, 5], [6, 7, 8, 9], [10]] flattened = reduce(lambda acc, lst: acc + lst, nested) print(flattened) # Output: [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

This works because the + operator concatenates lists. The accumulator starts with [1, 2, 3], then appends [4, 5] to get [1, 2, 3, 4, 5], and so on.

Example 4: Building a String from Parts

from functools import reduce

words = ["Python", "is", "a", "powerful", "language"]

sentence = reduce(lambda acc, word: acc + " " + word, words)

print(sentence)

# Output: Python is a powerful language

# In practice, you would use str.join() for this:

print(" ".join(words))

# Output: Python is a powerful language

The Initializer Parameter

The optional third argument to reduce() is the initializer. It serves as the starting value for the accumulation and is used as the default if the iterable is empty.

from functools import reduce

# Without initializer - fails on empty list

try:

result = reduce(lambda a, b: a + b, [])

except TypeError as e:

print(f"Error: {e}")

# Output: Error: reduce() of empty sequence with no initial value

# With initializer - returns the initializer for empty list