AWS – SQS

SQS is a messaging system similar to ActiveMQ or RabbitMQ. It acts as a temporary repository for messages that are waiting to be processed while the server is busy with other work. SQS is fully managed by AWS, so you don’t have to manage any middleware or servers. Using SQS, you can send, store, and receive messages between software components at any volume, without losing messages or requiring other services to be available.

SQS is pull-based, not push-based: consumers poll the queue for messages. Messages can stay in the queue from 1 minute to 14 days. The default retention period is 4 days.

Visibility timeout is the amount of time a message stays invisible to other readers after one reader has received it. If the message is not processed and deleted within the visibility timeout, it becomes available again for another reader to pick up and process. This can result in one message being processed more than once. The default visibility timeout is 30 seconds and the maximum is 12 hours. Increase the visibility timeout if processing a message can take longer than the default.
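To make the visibility timeout concrete, here is a toy, in-memory model of the behaviour described above. It is not the SQS API: `ToyQueue` and its methods are hypothetical, time is passed in as plain seconds so the result is deterministic, and the message body doubles as the identifier that real SQS would call a receipt handle.

```java
import java.util.ArrayList;
import java.util.List;

// Toy, single-threaded model of SQS visibility timeout; NOT the SQS API.
// Time is passed in as seconds so the behaviour is deterministic.
class ToyQueue {
    static final int MAX_VISIBILITY_TIMEOUT_SECONDS = 12 * 60 * 60; // 12 hours = 43,200 s

    private static final class Entry {
        final String body;       // also acts as the "receipt handle" in this toy model
        long invisibleUntil = 0; // hidden from receivers until this time

        Entry(String body) {
            this.body = body;
        }
    }

    private final List<Entry> entries = new ArrayList<>();
    private final int visibilityTimeoutSeconds;

    ToyQueue(int visibilityTimeoutSeconds) {
        if (visibilityTimeoutSeconds < 0 || visibilityTimeoutSeconds > MAX_VISIBILITY_TIMEOUT_SECONDS) {
            throw new IllegalArgumentException("visibility timeout must be 0..43200 seconds");
        }
        this.visibilityTimeoutSeconds = visibilityTimeoutSeconds;
    }

    void send(String body) {
        entries.add(new Entry(body));
    }

    // Returns the first visible message (or null) and hides it for the visibility timeout.
    // Receiving does NOT remove the message from the queue.
    String receive(long nowSeconds) {
        for (Entry e : entries) {
            if (e.invisibleUntil <= nowSeconds) {
                e.invisibleUntil = nowSeconds + visibilityTimeoutSeconds;
                return e.body;
            }
        }
        return null;
    }

    // Only an explicit delete removes the message for good.
    boolean delete(String body) {
        return entries.removeIf(e -> e.body.equals(body));
    }
}
```

In this model, a reader that receives `order-1` at second 0 with a 30-second timeout hides it until second 30. If the reader hasn't deleted it by then, the next `receive` call returns it again, which is how a message ends up processed twice.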

Messages can contain up to 256 KB of text in any format. SQS solves the problem of receiving more messages or events than a server can process at once: it stores the messages and hands them to the server when the server is ready for them.
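The 256 KB limit is measured in bytes, not characters, so a body containing multibyte UTF-8 text reaches it sooner than its `length()` suggests. A minimal pre-send check, assuming you want to reject oversized bodies before calling SQS. `MessageSizeCheck` is a hypothetical helper that checks only the body; message attributes, if you send any, add to the total.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical pre-send check of the message body against the 256 KB limit.
class MessageSizeCheck {
    static final int MAX_MESSAGE_BYTES = 256 * 1024; // 262,144 bytes

    // The limit is in bytes, so count UTF-8 bytes rather than Java chars.
    static int utf8Bytes(String body) {
        return body.getBytes(StandardCharsets.UTF_8).length;
    }

    static boolean fits(String body) {
        return utf8Bytes(body) <= MAX_MESSAGE_BYTES;
    }
}
```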

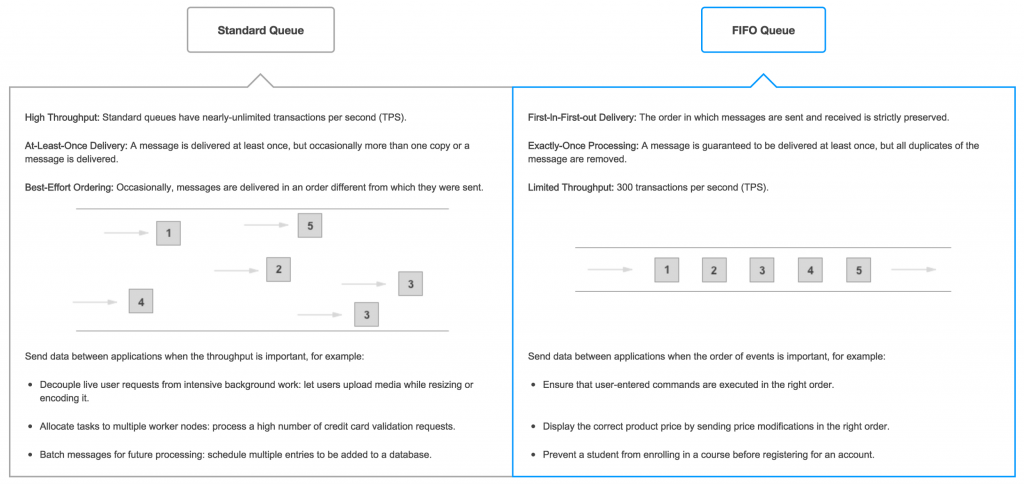

There are two queue types: Standard queues and FIFO queues.

Standard Queue

Standard queues guarantee that a message is delivered at least once, but occasionally a message might be delivered more than once. Messages in standard queues are generally delivered in the order they are sent, but that order is best-effort, not guaranteed.

You can set delay seconds (DelaySeconds), which tells SQS to wait a certain number of seconds before making a message visible to readers.
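A per-message DelaySeconds value can range from 0 to 900 seconds (15 minutes). A small sketch of that rule, using a hypothetical `DelaySecondsCheck` helper that validates the value and returns the earliest time (in epoch seconds) at which readers can receive the message:

```java
// Hypothetical helper for per-message DelaySeconds on a standard queue.
class DelaySecondsCheck {
    static final int MAX_DELAY_SECONDS = 15 * 60; // 900 seconds

    // Earliest epoch second at which readers can receive a message sent at sentAtSeconds.
    static long firstVisibleAt(long sentAtSeconds, int delaySeconds) {
        if (delaySeconds < 0 || delaySeconds > MAX_DELAY_SECONDS) {
            throw new IllegalArgumentException("DelaySeconds must be between 0 and 900");
        }
        return sentAtSeconds + delaySeconds;
    }
}
```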

Standard queues support a nearly unlimited number of API calls per second.

FIFO Queue

FIFO, which stands for first-in-first-out, delivers and processes messages in the exact order they are sent. FIFO queues provide exactly-once processing, so duplicates are not introduced into the queue.

FIFO queues support up to 3,000 messages per second with batching, or up to 300 messages per second (300 send, receive, or delete operations per second) without batching. You can submit a support ticket to AWS to request a higher limit.
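The 3,000 figure comes from batching: a batch call such as SendMessageBatch carries at most 10 messages, so 300 operations per second can move up to 300 × 10 = 3,000 messages. A sketch that splits outgoing messages into batches of at most 10; the `SendBatches` class is hypothetical, and each inner list would become one SendMessageBatch request.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: split messages into groups that fit one batch call each.
class SendBatches {
    static final int MAX_BATCH_SIZE = 10; // a batch call carries at most 10 messages

    static <T> List<List<T>> partition(List<T> messages) {
        List<List<T>> batches = new ArrayList<>();
        for (int i = 0; i < messages.size(); i += MAX_BATCH_SIZE) {
            int end = Math.min(i + MAX_BATCH_SIZE, messages.size());
            batches.add(new ArrayList<>(messages.subList(i, end)));
        }
        return batches;
    }

    // Throughput ceiling when every operation carries a full batch.
    static int maxMessagesPerSecond(int operationsPerSecond) {
        return operationsPerSecond * MAX_BATCH_SIZE;
    }
}
```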

MessageGroupId groups messages that belong together; messages with the same MessageGroupId are processed in FIFO order. Messages with different MessageGroupId values might not be in order relative to each other.
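The ordering guarantee applies per message group, not across the whole queue. The toy model below (hypothetical `MessageGroups` and `Msg` types, not the SQS API) shows the view a FIFO consumer can rely on: within each MessageGroupId the original send order survives, while the relative order between groups is not guaranteed.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Toy model of FIFO ordering per message group; NOT the SQS API.
class MessageGroups {
    // A sent message: its MessageGroupId and body (hypothetical type).
    static final class Msg {
        final String groupId;
        final String body;

        Msg(String groupId, String body) {
            this.groupId = groupId;
            this.body = body;
        }
    }

    // Splits messages (given in send order) into per-group sequences.
    // Each sequence keeps send order; nothing is promised about order across sequences.
    static Map<String, List<String>> byGroup(List<Msg> sent) {
        Map<String, List<String>> groups = new LinkedHashMap<>();
        for (Msg m : sent) {
            groups.computeIfAbsent(m.groupId, k -> new ArrayList<>()).add(m.body);
        }
        return groups;
    }
}
```

Using a user ID as the MessageGroupId, for example, keeps each user's events in order while different users' events can still be processed in parallel.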

MessageDeduplicationId is used to make sure messages are unique and are not sent into the queue multiple times. If a message with a particular MessageDeduplicationId is sent successfully, any messages sent with the same MessageDeduplicationId are accepted successfully but aren’t delivered during the 5-minute deduplication interval. If ContentBasedDeduplication is enabled on the queue, SQS generates the deduplication ID from a SHA-256 hash of the message body.
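A toy model of the 5-minute deduplication interval, plus the content-based variant used by `createFIFOQueue` below (`ContentBasedDeduplication=true`), where the ID is derived from a SHA-256 hash of the body. `DedupWindow` is hypothetical, and the hex string it produces only illustrates the idea; it is not claimed to match the exact ID format SQS generates.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HashMap;
import java.util.Map;

// Toy model of FIFO deduplication; NOT the SQS API.
class DedupWindow {
    static final long WINDOW_SECONDS = 5 * 60; // 5-minute deduplication interval

    private final Map<String, Long> firstSeenAt = new HashMap<>();

    // true: the message would be enqueued.
    // false: accepted but dropped, because the same ID was seen inside the window.
    boolean accept(String deduplicationId, long nowSeconds) {
        Long seen = firstSeenAt.get(deduplicationId);
        if (seen != null && nowSeconds - seen < WINDOW_SECONDS) {
            return false;
        }
        firstSeenAt.put(deduplicationId, nowSeconds);
        return true;
    }

    // Illustrates content-based deduplication: same body -> same SHA-256 -> same ID.
    static String contentBasedId(String body) {
        try {
            byte[] digest = MessageDigest.getInstance("SHA-256")
                    .digest(body.getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e); // every Java platform must provide SHA-256
        }
    }
}
```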

For FIFO queues, the DelaySeconds attribute can only be set at the queue level, not on individual messages.
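Because FIFO queues only take DelaySeconds at the queue level, code that serves both queue types has to decide where the delay goes. A minimal sketch, assuming queues are told apart by the required `.fifo` name suffix. `DelayPlacement` is hypothetical; the attribute names `FifoQueue`, `ContentBasedDeduplication`, and `DelaySeconds` are the ones SQS uses, and the map is meant for `CreateQueueRequest.withAttributes`.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper deciding where a delay goes for standard vs FIFO queues.
class DelayPlacement {
    // FIFO queue names (and therefore their URLs) end in ".fifo".
    static boolean isFifo(String queueNameOrUrl) {
        return queueNameOrUrl.endsWith(".fifo");
    }

    // Standard queues accept a per-message DelaySeconds; FIFO queues do not.
    static boolean canSetPerMessageDelay(String queueUrl) {
        return !isFifo(queueUrl);
    }

    // Queue-level attributes for a FIFO queue with a fixed delay (range checks omitted).
    static Map<String, String> fifoQueueAttributes(int delaySeconds) {
        Map<String, String> attributes = new HashMap<>();
        attributes.put("FifoQueue", "true");
        attributes.put("ContentBasedDeduplication", "true");
        attributes.put("DelaySeconds", Integer.toString(delaySeconds));
        return attributes;
    }
}
```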

Long Polling

Long polling doesn’t return a response until a message is available or the long polling wait time expires. Long polling can save you money because your application makes fewer calls to the SQS API and gets fewer empty responses. The recommended wait time is 20 seconds, which is also the maximum. The longer the wait time, the less likely a call is to return an empty response, which saves requests and resources.
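Rough arithmetic for an empty queue, assuming every call waits the full wait time and ignoring network latency: one poller makes about 3600 / waitTimeSeconds calls per hour. With the 10 seconds used in the receive loop later in this post that is 360 calls per hour; with the 20-second maximum it drops to 180. `LongPollingMath` is a hypothetical helper.

```java
// Hypothetical helper: ReceiveMessage calls per hour for one poller on an empty queue.
class LongPollingMath {
    static final int MAX_WAIT_TIME_SECONDS = 20; // long polling maximum

    // Assumes each call waits the full wait time and returns empty; latency ignored.
    static long emptyReceivesPerHour(int waitTimeSeconds) {
        if (waitTimeSeconds < 1 || waitTimeSeconds > MAX_WAIT_TIME_SECONDS) {
            throw new IllegalArgumentException("long polling wait time must be 1..20 seconds");
        }
        return 3600L / waitTimeSeconds;
    }
}
```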

Using SQS Locally

At the time of writing, I have not found a way to run SQS on my local machine that works consistently. I have tried many approaches people have posted online, but none worked for me. For local development, I use a set of queues separate from the ones I use for other environments like Dev, QA, or production. This has been the best solution for me. If you find a solution that works for you, please leave a link in the comment section.

Spring project example: sending an email after a user has signed up for your application.

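// AWS SDK for Java 1.x and Spring imports for the beans below.
import com.amazonaws.auth.AWSCredentialsProvider;
import com.amazonaws.auth.profile.ProfileCredentialsProvider;
import com.amazonaws.regions.Regions;
import com.amazonaws.services.sqs.AmazonSQS;
import com.amazonaws.services.sqs.AmazonSQSClientBuilder;
import org.springframework.context.annotation.Bean;

// Declare these beans inside a Spring @Configuration class.

// Loads credentials for the named profile from the local AWS credentials file.
@Bean
public AWSCredentialsProvider amazonAWSCredentialsProvider() {
    return new ProfileCredentialsProvider("folauk100-dev");
}

// SQS client for us-west-2 that uses the profile credentials above.
@Bean
public AmazonSQS amazonSQSMain() {
    return AmazonSQSClientBuilder.standard()
            .withCredentials(amazonAWSCredentialsProvider())
            .withRegion(Regions.US_WEST_2)
            .build();
}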

How to create a Standard queue and a FIFO queue

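import java.util.HashMap;
import java.util.Map;
import com.amazonaws.services.sqs.model.CreateQueueRequest;
import com.amazonaws.services.sqs.model.CreateQueueResult;

// These methods assume an injected AmazonSQS field named amazonSQS and an SLF4J logger named log.

// Creates a standard queue and returns its URL.
public String createQueue(String name) {
    CreateQueueRequest createQueueRequest = new CreateQueueRequest().withQueueName(name);
    CreateQueueResult result = amazonSQS.createQueue(createQueueRequest);
    log.debug("queue url: {}", result.getQueueUrl());
    log.debug("queue: {}", result);
    return result.getQueueUrl();
}

/**
 * Creates a FIFO queue with content-based deduplication and returns its URL.
 * The required ".fifo" suffix is appended to the name. If you need a delay,
 * set DelaySeconds here as a queue attribute, because FIFO queues don't
 * support setting a delay when a message is sent.
 *
 * @param name queue name without the ".fifo" suffix
 * @return the URL of the created queue
 */
public String createFIFOQueue(String name) {
    final Map<String, String> attributes = new HashMap<>();
    attributes.put("FifoQueue", "true");
    attributes.put("ContentBasedDeduplication", "true");
    CreateQueueRequest createQueueRequest = new CreateQueueRequest()
            .withQueueName(name + ".fifo")
            .withAttributes(attributes);
    CreateQueueResult result = amazonSQS.createQueue(createQueueRequest);
    log.debug("queue url: {}", result.getQueueUrl());
    log.debug("queue: {}", result);
    return result.getQueueUrl();
}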

Send messages to a queue

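import java.util.Map;
import com.amazonaws.services.sqs.model.MessageAttributeValue;
import com.amazonaws.services.sqs.model.SendMessageRequest;
import com.amazonaws.services.sqs.model.SendMessageResult;

// Sends msg as JSON. SQSMessage and ObjectUtils are the application's own classes.
public boolean sendMessage(String queueUrl, SQSMessage msg, int delaySeconds,
        Map<String, MessageAttributeValue> messageAttributes) {
    if (msg == null) {
        throw new IllegalArgumentException("msg must not be null");
    }
    SendMessageRequest sendMessageRequest = new SendMessageRequest()
            .withQueueUrl(queueUrl)
            .withMessageBody(msg.toJson());
    if (messageAttributes != null) {
        log.debug("messageAttributes set!");
        sendMessageRequest.withMessageAttributes(messageAttributes);
    }
    // Per-message delays (0 to 900 seconds) work only on standard queues.
    // For a FIFO queue, pass delaySeconds = 0; FIFO queues also require
    // withMessageGroupId(...), which this method does not set.
    if (delaySeconds > 0) {
        sendMessageRequest.withDelaySeconds(delaySeconds);
    }
    log.debug("messageAttributes={}", ObjectUtils.toJson(sendMessageRequest.getMessageAttributes()));
    SendMessageResult sendMessageResult = amazonSQS.sendMessage(sendMessageRequest);
    String resultId = sendMessageResult.getMessageId();
    log.info("resultId={}", resultId);
    return true;
}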

Receive messages

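import java.util.List;
import org.springframework.scheduling.annotation.Async;
import com.amazonaws.services.sqs.model.DeleteMessageRequest;
import com.amazonaws.services.sqs.model.DeleteMessageResult;
import com.amazonaws.services.sqs.model.Message;
import com.amazonaws.services.sqs.model.ReceiveMessageRequest;

// Long-polls the accounts queue forever on a background thread (@Async needs @EnableAsync).
// SQSQueue, SQSMessage, ObjectUtils and handleAccountMessage are the application's own code.
@Async
public void processAccountQueue() {
    int waitingSeconds = 10; // long polling wait time (maximum is 20)
    String queueUrl = SQSQueue.ACCOUNTS_QUEUE_URL;
    final ReceiveMessageRequest receiveMessageRequest = new ReceiveMessageRequest(queueUrl)
            .withWaitTimeSeconds(waitingSeconds);
    while (true) {
        log.debug("polling {}", queueUrl);
        final List<Message> messages = amazonSQS.receiveMessage(receiveMessageRequest).getMessages();
        if (messages == null || messages.isEmpty()) {
            log.debug("{} is empty", queueUrl);
            continue;
        }
        for (final Message message : messages) {
            log.debug("Message Received");
            SQSMessage sqsMessage = SQSMessage.fromJson(message.getBody());
            boolean handled = false;
            // Up to 6 attempts in total: the first try plus 5 retries.
            for (int attempt = 0; attempt <= 5 && !handled; attempt++) {
                try {
                    handleAccountMessage(sqsMessage);
                    handled = true;
                } catch (Exception e) {
                    log.debug("attempt={} failed", attempt, e);
                }
            }
            if (handled) {
                // Delete the message only once it has been processed successfully.
                DeleteMessageResult deleteMessageResult = amazonSQS
                        .deleteMessage(new DeleteMessageRequest(queueUrl, message.getReceiptHandle()));
                log.debug("delete message response={}",
                        ObjectUtils.toJson(deleteMessageResult.getSdkResponseMetadata()));
            } else {
                // Not deleted: the message becomes visible again after its visibility timeout.
                log.warn("giving up on message {} for now", message.getMessageId());
            }
        }
    }
}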

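List the queues for a profile

aws sqs list-queues --profile {profile-name}

Receive up to 10 messages, including all attributes and message attributes

aws sqs receive-message --queue-url {queue-url} --profile {profile-name} --attribute-names All --message-attribute-names All --max-number-of-messages 10

Empty all messages from a queue

aws sqs purge-queue --queue-url {queue-url} --profile {profile-name}

Send a message with a 10-second delay, reading the message attributes from message.json

aws sqs send-message --queue-url {queue-url} --message-body "test body." --delay-seconds 10 --message-attributes file://message.json

Delete a message using the receipt handle returned when it was received

aws sqs delete-message --queue-url {queue-url} --receipt-handle {messageReceiptHandle}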

Immediately after a message is received, it remains in the queue. To prevent other consumers from processing the message again, Amazon SQS sets a visibility timeout, a period of time during which Amazon SQS prevents other consumers from receiving and processing the message. The default visibility timeout for a message is 30 seconds. The minimum is 0 seconds. The maximum is 12 hours.

Change the visibility timeout of a received message (36000 seconds is 10 hours)

aws sqs change-message-visibility --queue-url {queue-url} --receipt-handle {messageReceiptHandle} --visibility-timeout 36000

If two readers (listeners) are polling the same queue, only one of them receives a given message while its visibility timeout is in effect. If that reader doesn’t delete the message in time, the other reader can receive it.

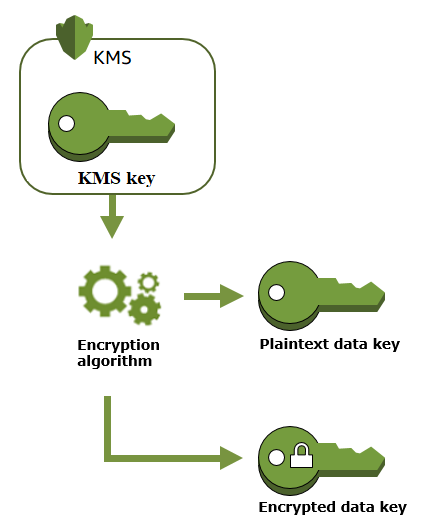

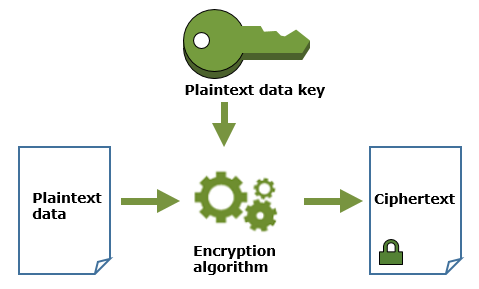

AWS – KMS and Encryption

AWS Key Management Service (KMS) makes it easy for you to create and manage keys and control the use of encryption across a wide range of AWS services and in your applications. AWS KMS is a secure and resilient service that uses hardware security modules that have been validated under FIPS 140-2, or are in the process of being validated, to protect your keys. AWS KMS is integrated with AWS CloudTrail to provide you with logs of all key usage to help meet your regulatory and compliance needs.

You can perform the following management actions on your AWS KMS master keys:

- Create, describe, and list master keys

- Enable and disable master keys

- Create and view grants and access control policies for your master keys

- Enable and disable automatic rotation of the cryptographic material in a master key

- Import cryptographic material into an AWS KMS master key

- Tag your master keys for easier identification, categorizing, and tracking

- Create, delete, list, and update aliases, which are friendly names associated with your master keys

- Delete master keys to complete the key lifecycle

With AWS KMS you can also perform the following cryptographic functions using master keys:

- Encrypt, decrypt, and re-encrypt data

- Generate data encryption keys that you can export from the service in plaintext or encrypted under a master key that doesn’t leave the service

- Generate random numbers suitable for cryptographic applications
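Generating data keys is what enables envelope encryption: KMS's GenerateDataKey returns a plaintext data key plus the same key encrypted under a master key. You encrypt your data locally with the plaintext key, store only the encrypted key next to the data, and later call KMS Decrypt to recover the data key. The sketch below mimics that flow entirely locally with the JDK's `javax.crypto` (AES-GCM). `EnvelopeEncryption` is hypothetical, no KMS calls are made, and `masterKey` is only a stand-in for a KMS master key, which in real use never leaves the service.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;
import javax.crypto.spec.SecretKeySpec;

// Local illustration of envelope encryption; masterKey stands in for a KMS master key.
class EnvelopeEncryption {
    private static final SecureRandom RANDOM = new SecureRandom();
    private static final int IV_BYTES = 12;

    // What you would store: the wrapped data key and the encrypted data.
    static final class Envelope {
        final byte[] encryptedDataKey;
        final byte[] ciphertext;

        Envelope(byte[] encryptedDataKey, byte[] ciphertext) {
            this.encryptedDataKey = encryptedDataKey;
            this.ciphertext = ciphertext;
        }
    }

    static SecretKey newAesKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(256);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // AES-GCM encryption; the random 12-byte IV is prepended to the output.
    private static byte[] seal(SecretKey key, byte[] plaintext) {
        try {
            byte[] iv = new byte[IV_BYTES];
            RANDOM.nextBytes(iv);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(128, iv));
            byte[] body = cipher.doFinal(plaintext);
            byte[] out = new byte[IV_BYTES + body.length];
            System.arraycopy(iv, 0, out, 0, IV_BYTES);
            System.arraycopy(body, 0, out, IV_BYTES, body.length);
            return out;
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // Reverses seal(); fails if the key is wrong or the data was tampered with.
    private static byte[] open(SecretKey key, byte[] sealed) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, key, new GCMParameterSpec(128, sealed, 0, IV_BYTES));
            return cipher.doFinal(sealed, IV_BYTES, sealed.length - IV_BYTES);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    static Envelope encrypt(SecretKey masterKey, String plaintext) {
        SecretKey dataKey = newAesKey(); // plays the role of the plaintext data key
        byte[] ciphertext = seal(dataKey, plaintext.getBytes(StandardCharsets.UTF_8));
        byte[] encryptedDataKey = seal(masterKey, dataKey.getEncoded()); // the "encrypted data key"
        return new Envelope(encryptedDataKey, ciphertext); // keep only these two values
    }

    static String decrypt(SecretKey masterKey, Envelope envelope) {
        byte[] dataKeyBytes = open(masterKey, envelope.encryptedDataKey); // KMS Decrypt's job
        SecretKey dataKey = new SecretKeySpec(dataKeyBytes, "AES");
        return new String(open(dataKey, envelope.ciphertext), StandardCharsets.UTF_8);
    }
}
```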

AWS – Alexa

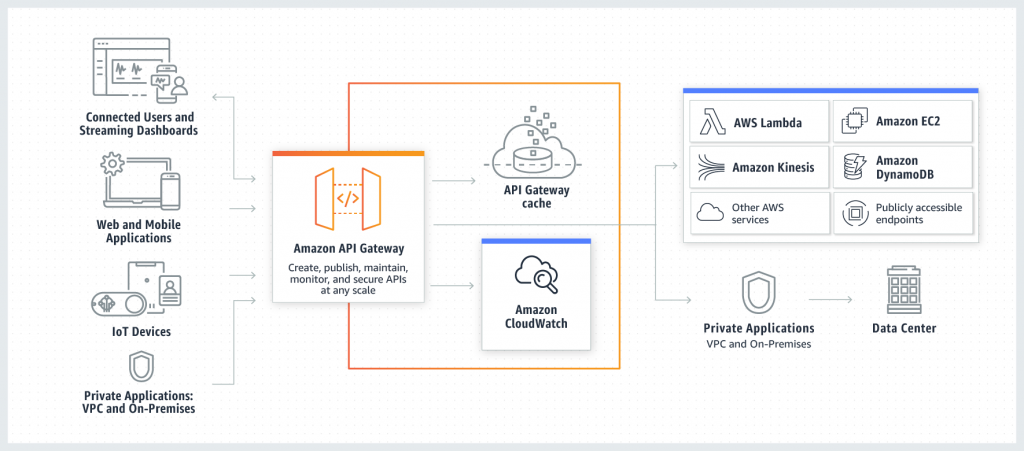

August 5, 2019

AWS – API Gateway

Amazon API Gateway is a fully managed service that makes it easy for developers to create, publish, maintain, monitor, and secure APIs at any scale. API Gateway handles all the tasks involved in accepting and processing up to hundreds of thousands of concurrent API calls, including traffic management, authorization and access control, monitoring, and API version management. API Gateway has no minimum fees or startup costs. You pay only for the API calls you receive and the amount of data transferred out and, with the API Gateway tiered pricing model, you can reduce your cost as your API usage scales.

AWS API Gateway Developer Guide

August 5, 2019

AWS – Lambda

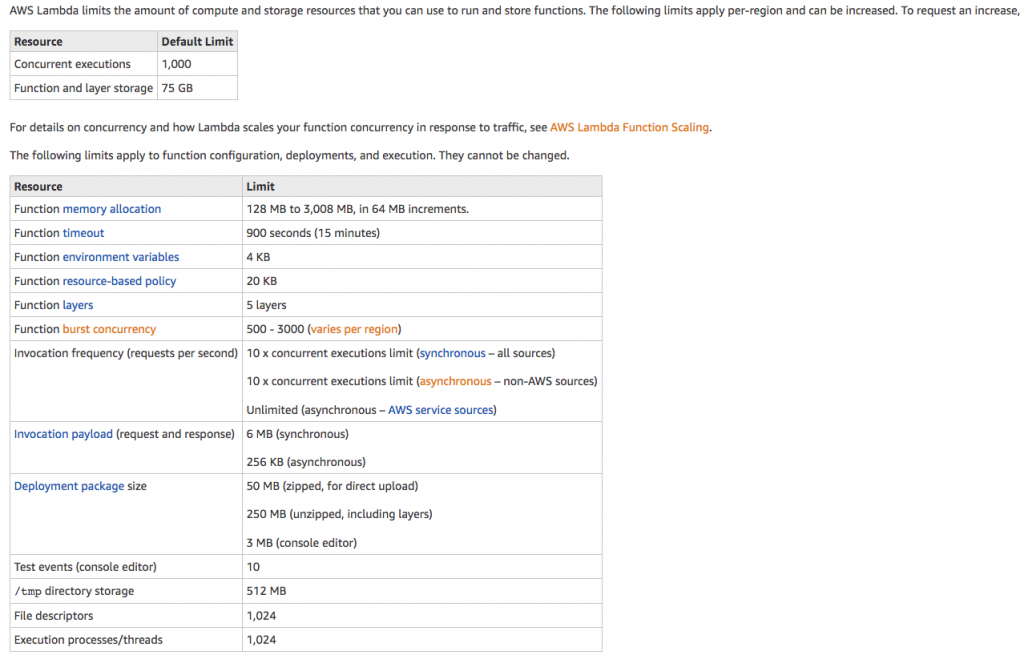

AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume – there is no charge when your code is not running. With Lambda, you can run code for virtually any type of application or backend service – all with zero administration. Just upload your code and Lambda takes care of everything required to run and scale your code with high availability. You can set up your code to automatically trigger from other AWS services or call it directly from any web or mobile app.

You can use AWS Lambda to run your code in response to events, such as changes to data in an Amazon S3 bucket or an Amazon DynamoDB table; to run your code in response to HTTP requests using Amazon API Gateway; or invoke your code using API calls made using AWS SDKs. With these capabilities, you can use Lambda to easily build data processing triggers for AWS services like Amazon S3 and Amazon DynamoDB, process streaming data stored in Kinesis, or create your own back end that operates at AWS scale, performance, and security.

When to use Lambda?

- When you want to focus on your application instead of maintaining servers, which is what most companies would like to do

- When using AWS Lambda, you are responsible only for your code. AWS Lambda manages the compute fleet, which offers a balance of memory, CPU, network, and other resources. In exchange, you give up flexibility: you cannot log in to compute instances or customize the operating system or language runtime.

- The maximum execution duration per invocation is 900 seconds (15 minutes).

- The deployment package is limited to 50 MB (zipped, for direct upload), and the non-persistent scratch space (/tmp) available to the function is 512 MB by default.

- A cold start delays the first request a Lambda function handles, because Lambda has to start a new instance of the function. Cold starts can be a real problem if you expect fast responses at a time when there are no active instances of your function. This can happen in low-traffic scenarios, when AWS shuts down instances of your function after a long period without requests. One workaround is to send a request periodically so that there is always an active instance ready to serve requests.

- Lambda functions write their logs to CloudWatch, which is the primary tool for troubleshooting and monitoring your functions.

- Lambda functions are short-lived, so they need to persist their state somewhere else. Options include DynamoDB or RDS tables, which can add a fixed monthly cost.

- You can invoke AWS Lambda functions over HTTPS by defining a custom REST API and endpoint using Amazon API Gateway, and then mapping individual methods, such as GET and PUT, to specific Lambda functions. Alternatively, you can add a special method named ANY to map all supported methods (such as GET, POST, PATCH, and DELETE) to your Lambda function. When you send an HTTPS request to the API endpoint, the Amazon API Gateway service invokes the corresponding Lambda function.

- Amazon API Gateway invokes your function synchronously with an event that contains details about the HTTP request that it received.

- Amazon API Gateway also adds a layer between your application users and your app logic that can throttle individual users or requests, protect against Distributed Denial of Service (DDoS) attacks, and provide a caching layer to cache responses from your Lambda function.

- Amazon API Gateway cannot invoke your Lambda function without your permission. You grant this permission via the permission policy associated with the Lambda function. Lambda also needs permission to call other AWS services like S3 or DynamoDB.

- An Amazon API Gateway API is a collection of resources and methods. For example, you might create one resource (DynamoDBManager) and define one method (POST) on it, backed by a Lambda function (LambdaFunctionOverHttps). When you call the API through its HTTPS endpoint, Amazon API Gateway invokes the Lambda function.

- Pass through the entire request – A Lambda function can receive the entire HTTP request (instead of just the request body) and set the HTTP response (instead of just the response body) using the AWS_PROXY integration type.

- Catch-all methods – Map all methods of an API resource to a single Lambda function with a single mapping, using the ANY catch-all method.

- Catch-all resources – Map all sub-paths of a resource to a Lambda function without any additional configuration, using the {proxy+} path parameter.

A Java handler method has the following general form:

outputType handler-name(inputType input, Context context) {
    …
}

import com.amazonaws.services.lambda.runtime.Context;
import com.amazonaws.services.lambda.runtime.RequestHandler;

// RequestClass and ResponseClass are user-defined POJOs: Lambda deserializes the JSON
// input into RequestClass and serializes the returned ResponseClass back to JSON.
public class HelloPojo implements RequestHandler<RequestClass, ResponseClass> {

    @Override
    public ResponseClass handleRequest(RequestClass request, Context context) {
        String greetingString = String.format("Hello %s, %s.", request.firstName, request.lastName);
        return new ResponseClass(greetingString);
    }
}
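The handler relies on RequestClass and ResponseClass, which the snippet doesn't define. A minimal sketch of them, assuming an input event like {"firstName": "Ada", "lastName": "Lovelace"}. The field name `greetings` and the convenience constructors are hypothetical choices; the no-argument constructors and setters are there so the runtime's JSON serializer can populate the objects. The fields are package-private because HelloPojo reads `request.firstName` directly. In a real project each public class would live in its own file; they are package-private here so the block compiles as one unit.

```java
// Hypothetical input POJO for HelloPojo.
class RequestClass {
    String firstName; // package-private: HelloPojo reads these fields directly
    String lastName;

    public RequestClass() {
    }

    public RequestClass(String firstName, String lastName) {
        this.firstName = firstName;
        this.lastName = lastName;
    }

    public String getFirstName() { return firstName; }
    public void setFirstName(String firstName) { this.firstName = firstName; }
    public String getLastName() { return lastName; }
    public void setLastName(String lastName) { this.lastName = lastName; }
}

// Hypothetical output POJO; serialized back to JSON as {"greetings": "..."}.
class ResponseClass {
    String greetings;

    public ResponseClass() {
    }

    public ResponseClass(String greetings) {
        this.greetings = greetings;
    }

    public String getGreetings() { return greetings; }
    public void setGreetings(String greetings) { this.greetings = greetings; }
}
```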